AP Systems Explained: Stale Data Beats Dead Servers

How DynamoDB, Cassandra, and DNS stay available during network failures—and how they clean up the mess afterward.

This is Part 3 of a 4-part series on the CAP Theorem and distributed systems trade-offs. Read Part 1 here or Part 2 here if you haven’t already done so.

In Part 2, we looked at CP systems: datastores that would rather refuse requests outright than give the wrong answer. We covered strong consistency (otherwise known as linearizability), quorum-based writes, leader elections, and how, put together, they’re expensive but correct.

AP Systems make the opposite decision: “I’d rather give you something than nothing.”

Sounds pretty crazy until you realize that much of our daily internet traffic works this way: DNS, the CDNs powering your Netflix habit, your social media feed, your shopping cart. The list keeps going. All of these systems prioritize availability over consistency, and they do just fine. Heck, they power our daily habits.

So what do we do with the inconsistency? We can’t avoid it, so we’ve got to manage it the best we can.

The “Always On” Philosophy

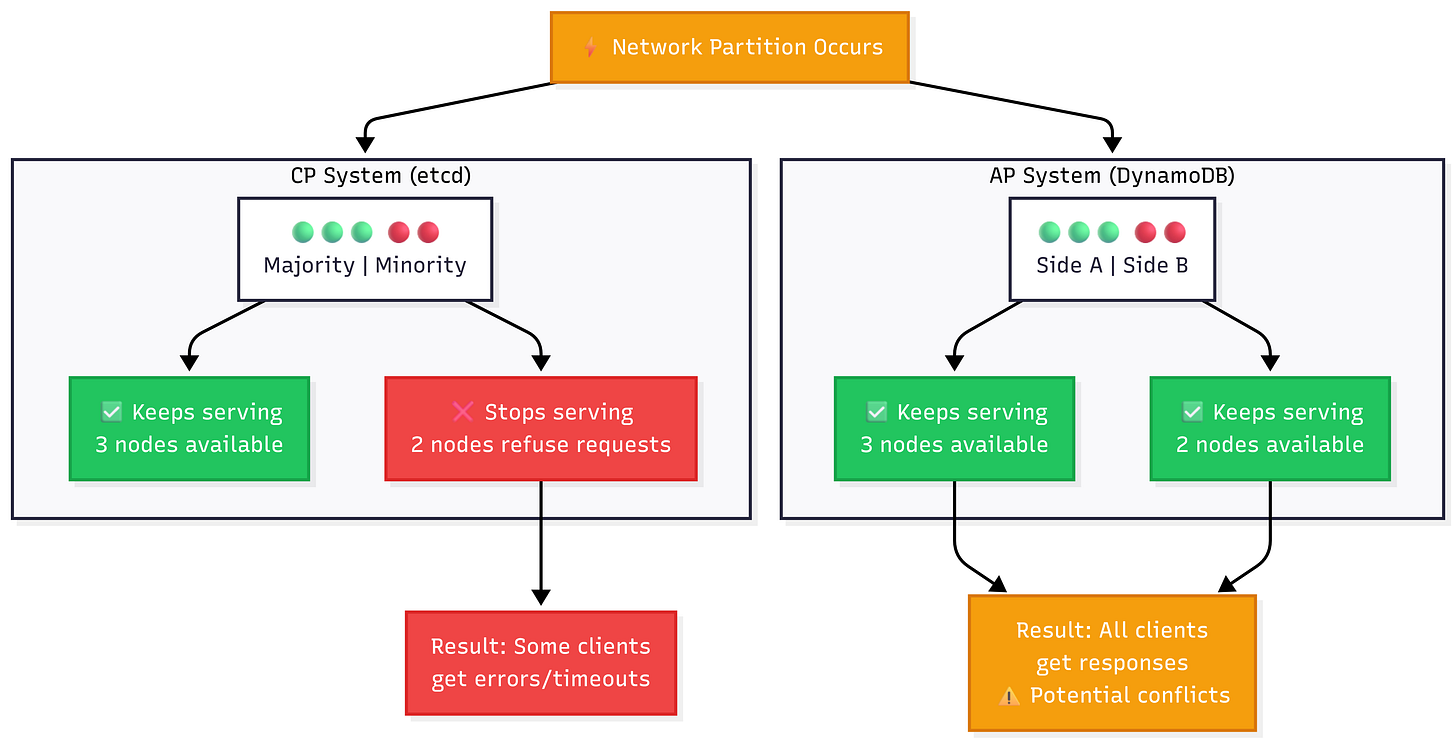

An AP system prioritizes availability over consistency during network partitions, the reverse of a CP system. In CAP terms, availability means every non-failing node must respond to requests, even if it can’t guarantee data freshness.

Okay, that’s fine and all, but let’s see what that actually looks like.

Multiple Nodes Accept Writes

Unlike CP systems, where all writes are funneled through a single leader, AP systems can accept writes on multiple nodes. During a network partition, both sides of the split continue to accept writes independently. This is what makes the system available: there’s no single point of failure for writes.

Naturally, you’d ask, “What if two clients write different values to the same key?” The short answer: both writes succeed, and you now have a conflict that needs to be resolved once the partition heals. We’ll discuss conflict resolution a little later in the article.

Reads Return Local Values

There is absolutely zero coordination when it comes to reads. There’s no double-checking with other nodes, no waiting for consensus that this is the latest value. Nodes in an AP system return whatever they have, even if that data is stale.

This is absolutely okay for social media feeds (nobody cares if a “like” shows up late), but absolutely horrendous for a banking system.

Partitioned Nodes Stay Alive

This is one of the main differences between AP and CP systems. Recall, in a CP system, the minority side of a partition stops completely. No reads or writes are served from that side. In an AP system, every node continues to operate. Both sides of the partition are alive and accepting reads/writes, operating as if nothing happened.
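To make those three behaviors concrete, here’s a minimal sketch of two replicas on opposite sides of a partition. The `Replica` class and the `cart:42` key are made up for illustration; no real datastore works exactly like this.

```python
class Replica:
    """A hypothetical AP replica: it always answers from local state."""

    def __init__(self, name):
        self.name = name
        self.data = {}

    def write(self, key, value):
        # No leader, no quorum: accept the write locally and acknowledge it.
        self.data[key] = value
        return "ok"

    def read(self, key):
        # No coordination: return whatever this replica has, stale or not.
        return self.data.get(key)


# Two replicas that cannot reach each other (a network partition).
east, west = Replica("east"), Replica("west")

east.write("cart:42", ["laptop"])
west.write("cart:42", ["phone"])

# Both sides stayed available, but they now disagree about the same key.
east_view = east.read("cart:42")
west_view = west.read("cart:42")
print(east_view, west_view)
```

Neither write was rejected, which is the whole point. The price is that the two views have to be reconciled once the replicas can talk again.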

Cleaning Up the Mess Later

CP Systems avoid conflicts by simply running every write through a leader and requiring quorum on writes. AP systems say YOLO and allow conflicts to happen. These conflicts get dealt with later on. Let’s discuss some of these conflict resolution strategies.

Last-Write-Wins (LWW)

This is the simplest approach. Each write has an associated timestamp. When two writes conflict, the one with the later timestamp wins. The other is discarded.

This is awesome for data systems where the most recent value matters:

User preferences (theme choice, language, notification settings, etc).

Status / state data (online/offline, connection state, etc).

Cache / Metadata (expiration times, modification timestamps, etc).

Social Media updates (profile bio contents, pictures, profile/cover photos).

What’s the problem with this? Timestamps in distributed systems are not really reliable. Node A’s clock might be slightly ahead of Node B’s clock. Two writes that occurred at the “same time” get different timestamps, and a write that actually happened later can carry the earlier timestamp and get tossed. Data loss can happen silently.

For the examples above, however, this is perfectly fine. Nobody cares about losing an intermediate GPS coordinate or a like on a post; these don’t break anything. For data like this, LWW is simple and its risk is tiny.
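Here’s a small sketch of LWW and the clock-skew failure mode. The `Write` type, the 200 ms skew, and the tie-break on value are assumptions for illustration, not how any particular database implements it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Write:
    value: str
    timestamp_ms: int  # wall-clock time reported by the node that took the write


def lww_merge(a: Write, b: Write) -> Write:
    """Keep the write with the later timestamp. Ties go to the larger value
    so every replica picks the same winner (a hypothetical tie-break)."""
    return max(a, b, key=lambda w: (w.timestamp_ms, w.value))


# Real order of events: node A's write happens first, node B's write 50 ms later.
# Node A's clock runs 200 ms fast, so its timestamp looks newer.
true_time_ms = 1_000_000
write_a = Write("theme=dark", true_time_ms + 200)   # skewed clock
write_b = Write("theme=light", true_time_ms + 50)   # accurate clock

winner = lww_merge(write_a, write_b)
print(winner.value)  # theme=dark: the later write (theme=light) was silently dropped
```

The merge itself is deterministic and order-independent, which is why LWW converges. It just converges on whichever node had the fastest clock.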

Apache Cassandra relies on LWW for conflict resolution by default. Amazon’s DynamoDB avoids this problem at the single-region level almost entirely by means of leader-based quorum writes. However, Global Tables (cross-region replication) rely on LWW to resolve conflicts between regions.

Vector Clocks

A vector clock, as the name suggests, is a clock….sike. It is NOT a clock reading. At all. A vector clock is a data structure that helps determine the causal ordering of events. Simply put, it helps you determine whether one write happened before another or whether they happened concurrently.

Each node maintains a vector of counters (one per node). The counters represent the node’s knowledge of how many events have occurred at every node in the system. On a local event, a node increments its own counter. When sending a message, the node attaches its vector. When receiving a message, it merges the received vector into its own by taking the larger value for each entry, then increments its own counter.

Let’s imagine a couple of situations. If write A occurred before write B, you know B is newer, so we keep B and discard A. If writes A and B occurred concurrently (neither one caused the other), the vector clock can tell you there’s a conflict, but it can’t resolve it. This is where conflict resolution techniques come into play.
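A minimal sketch of those rules, with vector clocks as plain dictionaries. The function names (`tick`, `merge`, `compare`) and node names are mine, chosen for illustration.

```python
from typing import Dict

VectorClock = Dict[str, int]


def tick(clock: VectorClock, node: str) -> VectorClock:
    """Local event: increment this node's own counter."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def merge(local: VectorClock, received: VectorClock, node: str) -> VectorClock:
    """On receive: take the element-wise max, then count the receive event."""
    merged = {n: max(local.get(n, 0), received.get(n, 0))
              for n in local.keys() | received.keys()}
    return tick(merged, node)


def compare(a: VectorClock, b: VectorClock) -> str:
    """Return 'before', 'after', 'equal', or 'concurrent'."""
    nodes = a.keys() | b.keys()
    a_le_b = all(a.get(n, 0) <= b.get(n, 0) for n in nodes)
    b_le_a = all(b.get(n, 0) <= a.get(n, 0) for n in nodes)
    if a_le_b and b_le_a:
        return "equal"
    if a_le_b:
        return "before"
    if b_le_a:
        return "after"
    return "concurrent"


# Write A on node1, then node2 sees A and performs write B: A happened before B.
write_a = tick({}, "node1")             # {'node1': 1}
write_b = merge({}, write_a, "node2")   # {'node1': 1, 'node2': 1}
print(compare(write_a, write_b))        # before

# Write C on node3 with no knowledge of A: A and C are concurrent.
write_c = tick({}, "node3")             # {'node3': 1}
print(compare(write_a, write_c))        # concurrent
```

Note that `compare` never consults a wall clock. “Concurrent” simply means neither vector dominates the other.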

In those situations, the system needs to decide whether it will pick one and risk losing data, or keep both and hand off the resolution to the application. An example of the latter is Amazon’s Dynamo. In the original paper, Dynamo was designed to return multiple versions of the data and let application-level logic merge them. The most famous example is Amazon’s shopping cart: if two conflicting cart states exist, merge them and keep all of the items. Note that modern DynamoDB dropped this entirely and opted for leader-based writes to prevent conflicts at the storage layer.
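The shopping cart merge can be sketched as a plain set union over the conflicting versions. `merge_carts` and the item names are hypothetical; this is the idea, not Amazon’s code.

```python
def merge_carts(siblings):
    """Application-level merge: keep every item that appears in any version."""
    merged = set()
    for cart in siblings:
        merged |= set(cart)
    return merged


# Two conflicting versions of the same cart, returned together by the store.
siblings = [{"laptop", "mouse"}, {"laptop", "keyboard"}]
cart = merge_carts(siblings)
print(sorted(cart))  # ['keyboard', 'laptop', 'mouse']
```

A union never loses an added item. The known side effect, which the Dynamo paper itself points out, is that a deleted item can occasionally resurface.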

In practice, vector clocks add a level of complexity that not every team wants to deal with. Not every team wants to write conflict resolution strategies.

Conflict-free Replicated Data Types (CRDTs)

CRDTs are data structures designed so that concurrent updates can always be merged without conflicts. No need for coordination or conflict resolution logic. The math behind CRDTs guarantees eventual convergence.

I’ll do my best to explain some CRDTs in the following sections.

Grow-Only Counter (G-Counter)

The simplest of all CRDTs, a G-Counter is, as its name suggests, a counter that only grows. It only supports increments.

Each node maintains its own counter. The total is the sum of all node counters. If two nodes both increment, there’s no problem, we just add them up. This is how likes work in social media.

Real-World Example: Social media likes, video view counts, and upvote tallies are natural fits for G-Counters. If two users like a post at the same time during a network partition, both increments survive when the partition heals.
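A minimal G-Counter sketch, assuming each replica has a unique id. The class and method names are mine. One detail worth noticing: the value is the sum of the per-node counters, but merging two replicas takes the per-node maximum, so syncing twice never double counts.

```python
class GCounter:
    def __init__(self, node_id):
        self.node_id = node_id
        self.counts = {}  # node id -> increments observed from that node

    def increment(self, amount=1):
        if amount < 1:
            raise ValueError("a G-Counter only grows")
        self.counts[self.node_id] = self.counts.get(self.node_id, 0) + amount

    def value(self):
        return sum(self.counts.values())

    def merge(self, other):
        # Element-wise max is commutative, associative, and idempotent.
        for node, count in other.counts.items():
            self.counts[node] = max(self.counts.get(node, 0), count)


a, b = GCounter("a"), GCounter("b")
a.increment()   # one like lands on replica a
b.increment()   # two likes land on replica b during the partition
b.increment()

a.merge(b)
b.merge(a)
a.merge(b)      # merging again is harmless
print(a.value(), b.value())  # 3 3
```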

Observed-Remove Set (OR-Set)

A set that supports both addition and removal concurrently. The way this works is, every add operation gets tagged with a unique identifier (UID). A remove operation only removes UIDs it has observed. What this means is if a node has not seen an add, it can’t remove it. In the simplest of terms, if an add and a remove occur on different nodes, the remove only cancels that add if the removing node had already synced and seen it.

Real-World Example: We’ll rely on the classic shopping cart example again. Imagine two different users/sessions update the same cart. User A adds a “Laptop” and User B removes a “Laptop”. What do you think would happen? A laptop is added to the cart. Why? User B is removing the “version” of a laptop it saw in its cart. Since the add hadn’t synced yet, User B didn’t see it, so it can’t remove it.
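Here’s a simplified OR-Set sketch of that cart scenario. It’s a state-based version that keeps removed tags around as tombstones; the class name, the use of `uuid4` for tags, and the session names are assumptions for illustration.

```python
import uuid


class ORSet:
    def __init__(self):
        self.adds = {}        # element -> set of unique tags, one per add
        self.removed = set()  # tags that some remove has observed (tombstones)

    def add(self, element):
        self.adds.setdefault(element, set()).add(uuid.uuid4().hex)

    def remove(self, element):
        # Only tags this replica has already seen are removed.
        self.removed |= self.adds.get(element, set())

    def contains(self, element):
        return bool(self.adds.get(element, set()) - self.removed)

    def merge(self, other):
        for element, tags in other.adds.items():
            self.adds.setdefault(element, set()).update(tags)
        self.removed |= other.removed


# Both sessions start from a synced cart that already holds one laptop.
session_a = ORSet()
session_a.add("laptop")
session_b = ORSet()
session_b.merge(session_a)

# During a partition: A adds another laptop, B removes the one it can see.
session_a.add("laptop")
session_b.remove("laptop")

session_a.merge(session_b)
session_b.merge(session_a)
print(session_a.contains("laptop"), session_b.contains("laptop"))  # True True
```

B’s remove only covered the tag it had observed, so A’s concurrent add wins and both replicas converge on a cart that still contains the laptop.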

Last-Write-Wins Register (LWW-Register)

This is basically LWW as a CRDT. This CRDT only stores a single value and resolves concurrent writes by keeping the latest write, by timestamp.

Real-World Example: The easiest one is GPS tracking apps displaying a “last known location” field. A more concrete example is caches. If two nodes update a cache simultaneously, the entry with the freshest timestamp is preserved. Naturally, for a cache only one value is necessary.
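A minimal LWW-Register sketch. The `(timestamp, node id)` pair is an assumption I’m adding so that two writes with the same timestamp still resolve the same way on every replica; the coordinates are made-up sample values.

```python
class LWWRegister:
    def __init__(self, node_id):
        self.node_id = node_id
        self.value = None
        self.stamp = (0, "")  # (timestamp, node id); node id breaks ties

    def set(self, value, timestamp):
        candidate = (timestamp, self.node_id)
        if candidate > self.stamp:
            self.value, self.stamp = value, candidate

    def merge(self, other):
        # Keep whichever side carries the later stamp.
        if other.stamp > self.stamp:
            self.value, self.stamp = other.value, other.stamp


r1, r2 = LWWRegister("n1"), LWWRegister("n2")
r1.set("40.71,-74.00", timestamp=100)   # last known location seen by n1
r2.set("40.73,-73.99", timestamp=105)   # a later update seen by n2

r1.merge(r2)
r2.merge(r1)
print(r1.value, r2.value)  # both replicas hold the timestamp-105 location
```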

Okay, that’s enough CRDT explanations. Now, CRDTs sound awesome (they are), but there are constraints. Not every data structure has a CRDT equivalent. They can also grow without bound if not properly maintained, taking up a lot of space. Plus, they can’t express arbitrary application logic; they only work when updates can be merged in any order.

“Eventually”….maybe?

Everyone has heard the term and it usually gets hand-waved to “all nodes eventually see the same data”. While that is true, it’s a bit incomplete.

What it actually means is, “if no new writes occur, all replicas will eventually converge to the same value.” Did you notice it? There’s no limit to what “eventually” could be. It could be milliseconds (ms) or minutes.

In the real world, most AP systems converge fairly quickly. Amazon’s DynamoDB replication typically happens within a second across all storage locations within a region. DNS propagation can take hours. The variance is huge.

What matters is whether your application can handle that time, the inconsistency window. Some can, and some absolutely can’t.

Stronger? Eventual Consistency

Not all forms of eventual consistency are the same. Some are stronger than others. These stronger models give you more guarantees while still being weaker than linearizability.

Read-Your-Writes

If Client A performs a write, future reads from the same client are guaranteed to see that write. Other clients may or may not see the updated write just yet, but Client A is guaranteed to see it. This is what users typically expect from most applications (e.g., “I just posted a comment, I should be able to see it”).
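One way to get read-your-writes, sketched under the assumption that every write carries a version number and the client remembers the version of its own last write. The `Replica` and `Session` classes are hypothetical; real systems often use sticky sessions or session tokens instead.

```python
class Replica:
    def __init__(self):
        self.version = 0
        self.value = None

    def apply(self, version, value):
        if version > self.version:
            self.version, self.value = version, value


class Session:
    """Hypothetical client session that remembers its own last write."""

    def __init__(self):
        self.last_written = 0

    def write(self, replica, value):
        replica.apply(replica.version + 1, value)  # other replicas catch up later
        self.last_written = replica.version

    def read(self, candidates):
        # Skip replicas that have not caught up with this session's own write.
        for replica in candidates:
            if replica.version >= self.last_written:
                return replica.value
        raise RuntimeError("no replica has caught up yet")


fresh, lagging = Replica(), Replica()
alice, bob = Session(), Session()

alice.write(fresh, "my new comment")
print(alice.read([lagging, fresh]))  # my new comment
print(bob.read([lagging, fresh]))    # None: bob may still see the old state
```

Alice is routed past the lagging replica because she knows what she wrote. Bob gets no such promise, which is exactly what the guarantee says.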

Monotonic Reads

This consistency model guarantees that once a client has read a value, it will never see an older version of that value on subsequent reads. Time does not go backwards. Without this guarantee, a client can refresh the page and see new data disappear.
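A rough sketch of monotonic reads, assuming each replica can report a version alongside its value. The `MonotonicReader` class and the `(version, value)` tuples are made up for illustration.

```python
class MonotonicReader:
    """Hypothetical client that refuses to go back in time."""

    def __init__(self):
        self.highest_seen = 0

    def read(self, replicas):
        # Each replica is modeled as a (version, value) pair.
        for version, value in replicas:
            if version >= self.highest_seen:
                self.highest_seen = version
                return value
        raise RuntimeError("every reachable replica is older than what we saw")


up_to_date = (2, "post with 3 comments")
stale = (1, "post with 2 comments")

reader = MonotonicReader()
first = reader.read([up_to_date, stale])
second = reader.read([stale, up_to_date])  # the stale replica is skipped
print(first, "|", second)
```

Without the `highest_seen` check, the second read would have hit the stale replica first and the third comment would have vanished on refresh.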

Causal Consistency

In this consistency model, if Operation A caused Operation B (A happens before B and B depends on A), then everyone must see Operation A before Operation B. You’ll always see them in the right order. The cause comes before the effect, everywhere (hence its name….causal). Imagine seeing a seething reply to a comment without seeing the comment first. That would be a bit confusing for the end user.
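The comment-and-reply case can be sketched by holding a message back until the message it depends on has been shown. The tuple layout and the function name are assumptions for illustration; real systems track dependencies with something like vector clocks.

```python
def deliver_in_causal_order(messages):
    """messages: list of (msg_id, depends_on, text) in arrival order.
    A message becomes visible only after the one it depends on is visible."""
    delivered, visible = set(), []
    pending = list(messages)
    progress = True
    while pending and progress:
        progress = False
        for msg in list(pending):
            msg_id, depends_on, text = msg
            if depends_on is None or depends_on in delivered:
                delivered.add(msg_id)
                visible.append(text)
                pending.remove(msg)
                progress = True
    return visible  # anything still pending stays hidden


# The reply reaches this replica before the comment it answers.
arrivals = [
    ("reply-1", "comment-1", "That take is terrible."),
    ("comment-1", None, "Tabs are better than spaces."),
]
print(deliver_in_causal_order(arrivals))
```

The reader sees the comment first and the reply second, even though the network delivered them the other way around.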

These consistency models are weaker than linearizability, which means they don’t take on the CAP theorem trade-offs we discussed in the CP Systems Overview. Naturally, this means that you can have these guarantees and still be highly available during network partitions.

This very clearly highlights the problem with the “pick-two” framing of the CAP theorem. Most applications don’t need true linearizability, they’re absolutely okay with high availability coupled with these stronger eventually consistent models.

Real-World AP Systems

Amazon’s DynamoDB: The Always On DB

The history of DynamoDB goes back to 2004, when Amazon’s e-commerce business suffered a few too many outages. The e-commerce business was pushing its relational databases to their limits even though it had fairly simple usage patterns. This culminated in the release of the famous Dynamo paper in 2007, which laid out the design and implementation of Dynamo, a highly available, leaderless, eventually consistent key-value store. This would later become the foundation for DynamoDB.

How DynamoDB Stays Available

Here’s what a lot of people confuse: DynamoDB the service is not the Dynamo paper.

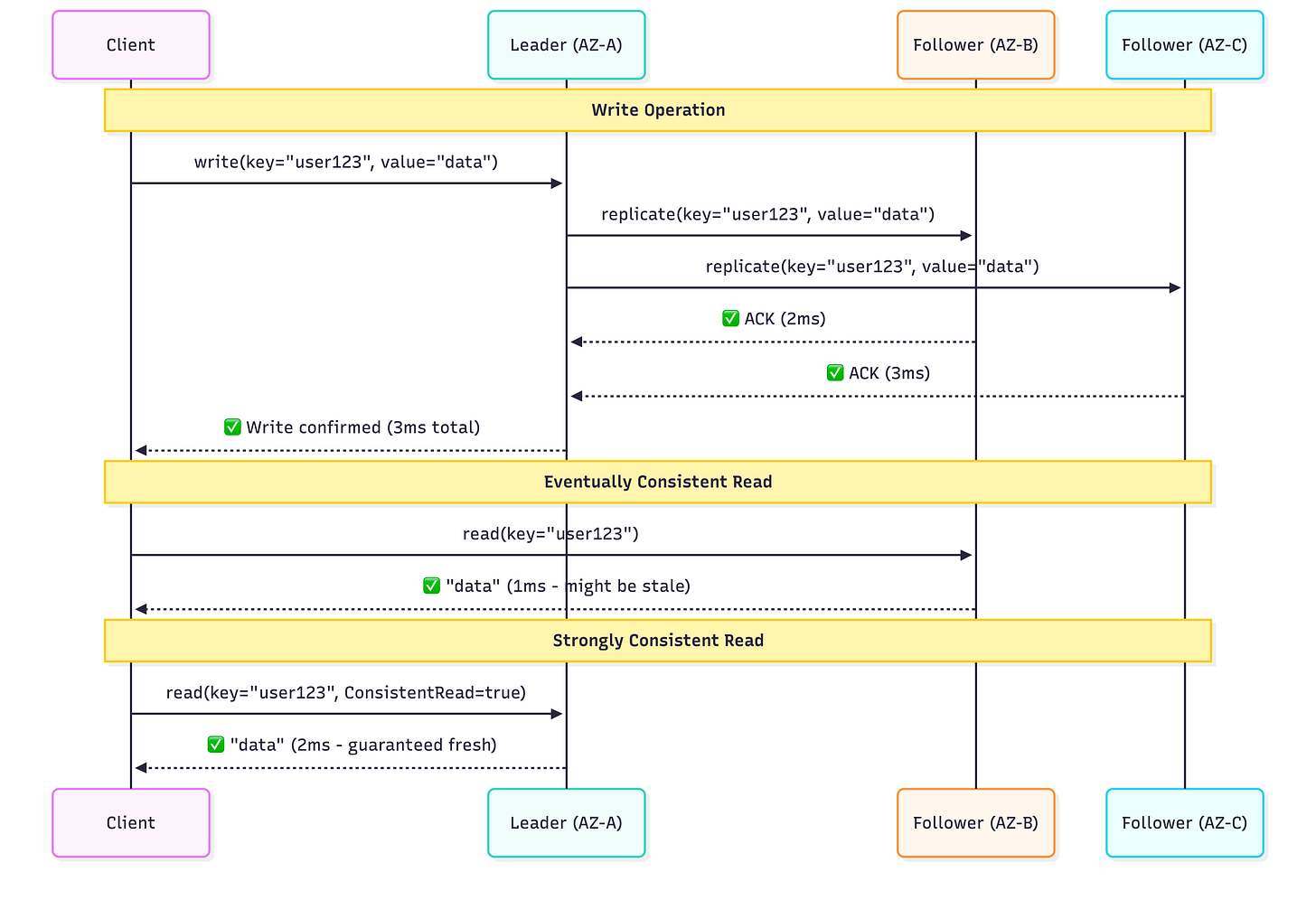

The original Dynamo paper laid out a completely leaderless system with sloppy quorum, vector clocks, and application-side conflict resolution. DynamoDB the service threw a lot of that out. DynamoDB now uses Multi-Paxos-based leader election among a partition’s replicas and quorum-based writes (2 of the 3 storage nodes must acknowledge a write before it commits). Does that sound familiar? It should, because that’s a CP pattern.

So why is DynamoDB here, in the AP section?

Because reads are AP by default. With the default setting of eventually consistent reads, your request can be routed to any of the three replicas. There’s no coordination and no leader dependency. If the replica happens to be behind, you’ll get stale data and the system will remain up.

The Trade-Offs in Reality

By default, DynamoDB offers eventually consistent reads, which means you could get stale data. But DynamoDB also offers strongly consistent reads, which get routed to the partition’s leader node. Now we have the downside of a CP system: dependence on the leader node being reachable.

DynamoDB doesn’t make a binary choice between AP and CP. It gives you a per-request option between AP and CP behavior on the read path, while keeping writes CP for durability. This is the kind of nuance that “pick two” completely misses.
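In the AWS SDK for Python, that per-request choice is the `ConsistentRead` parameter on `get_item`. The sketch below takes the client as an argument so it has no hard dependency; the table name `carts` and the key shape are hypothetical.

```python
def get_item(client, table, key, strongly_consistent=False):
    """Read one item. `client` is assumed to be a boto3 DynamoDB client.

    ConsistentRead=False (the default) is an eventually consistent read.
    ConsistentRead=True asks for a strongly consistent read.
    """
    response = client.get_item(
        TableName=table,
        Key=key,
        ConsistentRead=strongly_consistent,
    )
    return response.get("Item")


# Usage, assuming AWS credentials and a table named "carts" (hypothetical):
#   import boto3
#   client = boto3.client("dynamodb")
#   key = {"pk": {"S": "cart#42"}}
#   get_item(client, "carts", key)                            # AP-style read
#   get_item(client, "carts", key, strongly_consistent=True)  # CP-style read
```

Same table, same key, same code path. The only thing that changes is which trade-off you accept for that one request.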

For the vast majority of use cases (e.g., shopping carts, user sessions, game state, IoT sensor data), the default eventually consistent reads are more than good enough. DynamoDB’s SLA promises 99.99% availability for standard tables and 99.999% for global tables. That works out to roughly 52.6 minutes and 5.3 minutes of downtime per year, respectively.
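The downtime budget behind an availability percentage is quick arithmetic. This sketch assumes a 365-day year; the function name is mine.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_minutes_per_year(availability_percent):
    """Minutes per year a service may be down and still meet the target."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


for sla in (99.99, 99.999):
    print(f"{sla}% -> {downtime_minutes_per_year(sla):.2f} min/year")
# 99.99%  -> about 52.56 minutes per year
# 99.999% -> about 5.26 minutes per year
```

Each extra nine cuts the allowed downtime by a factor of ten.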

Apache Cassandra: The Choose Your Own Consistency DB

Born from the need to let users instantly search through their inbox message history, Cassandra was Facebook’s answer to a scalable, reliable, and highly available storage system. It took learnings and design decisions from its predecessors: Amazon’s Dynamo (partitioning and replication) and Google’s BigTable (data and storage engine model). Open-sourced in 2008, Cassandra was built to handle ginormous write throughput across multiple data centers.

How Cassandra Stays Available

Cassandra has no single point of failure. There is no leader. Each and every node in the cluster can serve reads and accept writes. Data is distributed with consistent hashing, similar to the original Dynamo design, and replicated to N nodes (a replication factor of 3 per datacenter is the usual recommendation).

This is a proper leaderless design, unlike DynamoDB. Each and every node is an equal. Any node can serve any request.

Cassandra also lets you control the consistency per query via its tunable consistency:

ONE: a read/write succeeds after one replica responds. This is the fastest and least consistent option.

QUORUM: a majority of replicas (n/2 + 1, rounded down) must respond. Slower than ONE but more consistent.

ALL: as the name suggests, all replicas must respond. This is the slowest and most strongly consistent option.

LOCAL_QUORUM: a majority of the replicas in the local datacenter (the one the coordinator node handling the request is in) must respond.

If you set the consistency level to QUORUM for both reads and writes, every read quorum overlaps every write quorum (R + W > N), so a read always reaches at least one replica holding the latest acknowledged write. You’ve got yourself strong consistency. You’ve basically turned Cassandra into a CP system for that query. Set both read and write consistency to ONE and you’ve got a purely AP system. You pick the consistency, per query. Every single time.
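The reason QUORUM on both sides behaves this way is the overlap rule: if the number of replicas a read waits for (R) plus the number a write waits for (W) exceeds the replication factor (N), the two sets must share at least one replica. A small sketch, with function names of my own choosing:

```python
def quorum(replication_factor):
    """Majority of replicas: floor(n / 2) + 1."""
    return replication_factor // 2 + 1


def read_overlaps_write(n, w, r):
    """True when every read set must share a replica with every write set."""
    return r + w > n


n = 3
q = quorum(n)
print(q)                                  # 2
print(read_overlaps_write(n, w=q, r=q))   # True:  QUORUM writes + QUORUM reads
print(read_overlaps_write(n, w=1, r=1))   # False: ONE + ONE can miss the latest write
print(read_overlaps_write(n, w=n, r=1))   # True:  ALL writes + ONE reads
```

With N = 3, a read at ONE after a write at ONE might land on a replica the write never touched. That’s the staleness you’re signing up for.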

The Trade-Offs in Reality

Cassandra relies on LWW as its default conflict resolution strategy (based on timestamps). This leaves it exposed to clock skew and bad timestamps, which can silently let the wrong write win.

Tombstones (deletion markers) can start to pile up and impact read performance if time-to-lives (TTLs), deletes, and compaction behavior aren’t managed carefully.

Tunable consistency, as awesome as it is, can also be detrimental to your system. Before using tunable consistency, you must understand each consistency level you choose. If misconfigured, you’ll pay the price in prod.

As scary as that sounded, Cassandra’s per-query tunable consistency is truly where it stands out. Number of views? Use ONE: it’s fast, and slightly stale data is perfectly fine. Inventory reservations? Use QUORUM: slower, but it’s correct.

For time-series data, messaging systems, and really anything requiring heavy write throughput across data centers, Cassandra’s flexibility is hard to beat.

DNS: The Most Important Stale Data on Earth

We mentioned DNS way back in Part 1, but it deserves a deeper look here. After all, it is the purest AP system in existence.

DNS is a globally distributed, hierarchically organized database. When you enter a URL in your browser, you kickstart a chain of reactions across multiple layers of DNS, from your recursive resolver (ISP provided, or company DNS, or public resolver), to Root, TLD, and Authoritative servers (each with the possibility of holding cached records).

How DNS Works

DNS handles an enormous volume of queries across servers all over the world. Imagine requiring linearizability across each and every query (i.e., making every query check with the authoritative server before responding). It would be insanely slow….like extremely slow. Not only would it be painstakingly slow, it would introduce a single point of failure for the entire internet. THE ENTIRE INTERNET. Nobody, and I mean absolutely nobody, would want that.

Instead, DNS relies on caching controlled by TTLs (Time-to-Live). Each DNS record has a TTL value that lets resolvers know how long to cache it before checking with the authoritative server again. Naturally, when you update a DNS record, the old record doesn’t just magically disappear. It sits cached on DNS servers around the world until the TTLs expire. This is exactly why DNS changes “take time to propagate” and most likely why your DevOps friend told you to wait after you updated an A record.
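A toy resolver cache shows why an updated record lingers. The `TtlCache` class is hypothetical, the addresses come from the documentation-only 192.0.2.0/24 range, and time is passed in explicitly so the example doesn’t have to wait an hour.

```python
class TtlCache:
    """Toy resolver cache: answers from cache until the record's TTL expires."""

    def __init__(self, lookup_authoritative):
        self.lookup_authoritative = lookup_authoritative
        self.entries = {}  # name -> (address, expires_at)

    def resolve(self, name, now):
        cached = self.entries.get(name)
        if cached and now < cached[1]:
            return cached[0]  # possibly stale, but no upstream trip needed
        address, ttl = self.lookup_authoritative(name)
        self.entries[name] = (address, now + ttl)
        return address


records = {"example.com": ("192.0.2.1", 3600)}  # address, TTL in seconds
cache = TtlCache(lambda name: records[name])

before = cache.resolve("example.com", now=0)
records["example.com"] = ("192.0.2.2", 3600)     # the A record is updated
during = cache.resolve("example.com", now=1800)  # still the old address
after = cache.resolve("example.com", now=3600)   # TTL expired, new address
print(before, during, after)  # 192.0.2.1 192.0.2.1 192.0.2.2
```

Between the update and the TTL expiring, the cache keeps handing out the old address without ever asking upstream. That window is the “propagation time.”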

The Trade-Offs in Reality

With caching, and depending on the TTL values, different users around the world can resolve the same domain to different IP addresses, sometimes for minutes and sometimes for hours. This is a completely tolerable consequence compared to the entirety of DNS going down. A small window of staleness is better than making name resolution slow and failure-prone.

That’s the most important thing that DNS teaches us: most applications can tolerate a few seconds of stale data. DNS is arguably the most critical system on the internet, and it runs on eventual consistency with TTLs that can be measured in hours. Hours. If the backbone of the internet can tolerate that level of staleness, your application can most certainly handle a few seconds.

When Should You Choose AP?

We’ve seen how DynamoDB, Cassandra, and DNS each make different architectural decisions while staying highly available and remaining on the AP side of the spectrum. Here’s how to tell whether your system should be one of them:

Go AP when:

Availability is non-negotiable. User-facing applications where downtime directly affects revenue, users, or both.

Stale reads are harmless. The data doesn’t change meaning even if it’s a few seconds or minutes behind.

Being global is an end goal. AP systems handle multi-region deployments very well. This allows you to avoid cross-region consensus overhead.

Write throughput matters. In leaderless / multi-leader designs, multiple nodes can accept writes concurrently. Think event logging, IoT telemetry, time-series data: basically anything where a lot of writes are expected.

Be cautious of AP systems when:

Correctness is non-negotiable. Financial transactions, payments/ledgers, inventory management, etc. Anything where conflicting writes cause real-world damage.

Conflict resolution isn’t simple. If your data can’t be merged cleanly (e.g., with CRDTs or well-defined rules), you’ll end up writing and maintaining custom resolution logic, something no team wants to do.

Users expect instant consistency. “I just saved my document and now it’s gone” is a horrible user experience, even if it re-appears two seconds later.

Same question I posed in Part 2, just from the opposite side of the lens: “What’s worse, being briefly wrong or briefly unavailable?”

If stale data is harmless or easily correctable, go AP. If stale data has the ability to cause real damage, go CP.

Up Next: Beyond the “Pick Two” Framing

We’ve dedicated two long posts to treating CP and AP as binary choices. They’re not.

In Part 4, the grand finale, we’ll look at how modern systems blur the line between the two:

Tunable Consistency: you saw this with Cassandra and DynamoDB.

PACELC Theorem: If during a partition you’re trading between availability and consistency, what are you trading when there is no partition?

How systems like Spanner and CockroachDB aim for C and A-like behavior and at what cost.

Practical guidance for choosing trade-offs in your own systems.

The CAP theorem provides you with a mental model. Part 4 of this series will give you the escape hatch.

— Ali
