Consistent Hashing 101

Why adding a server shouldn't ruin your entire cache.

In the CAP Theorem series, we spent a lot of time discussing what happens when nodes in a distributed system disagree or can’t communicate with each other. We covered CP systems, AP systems, and the trade-offs between consistency and availability. But we skipped over a fairly fundamental question: how does the system decide which node gets which data in the first place?

This is the problem that consistent hashing addresses. If you’ve ever used Amazon DynamoDB, Apache Cassandra, or a Content Delivery Network (CDN), you’ve been relying on consistent hashing whether you knew it or not.

The overarching goal of this post is to walk through the problem that consistent hashing solves, how it works, and where you’ll find it in production systems.

This post is part of the 101 Series on distributed systems fundamentals. If you want the next one in your inbox, subscribe here

The Problem: Modulo Hashing

Before getting into consistent hashing itself, let’s understand the naive approach first and see why it causes problems.

Let’s say you have N servers and you need to decide which server stores a given key. The simplest approach is to use modular hashing (hash the key and take the modulo of the number of servers):

server = hash(key) % NWith 3 servers, hash("user:1234") % 3 = 1, so the key goes to Server 1. This is simple, fast, and works well under stable conditions.

The problem shows up when N changes.

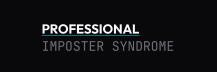

Let’s say Server 2 goes down. Now N = 2. The same key, hash("user:1234") % 2, evaluates to 0. That key has moved to Server 0. One key moving isn’t a big deal, but the issue is that almost every key moves. When N changes, the modulo result changes for the majority of keys. In a cache cluster, this means almost every cached entry becomes invalid simultaneously. Every request becomes a cache miss, and the backing database gets hit with traffic it wasn’t designed to absorb all at once.

This is referred to as a cache stampede (sometimes called a thundering herd).

Modulo hashing breaks under scaling. When Server 2 fails and N drops from 3 to 2, most keys get reassigned to a different server. Only the ones whose hash happens to divide cleanly stay put.

The math is straightforward. When you go from N to N-1 servers, approximately (N-1)/N of your keys get remapped. Remove one server from a 10-node cluster and roughly 90% of your keys move. Additionally, adding a server to handle increased load causes the exact same problem. The very act of scaling invalidates most of your cached data.

In order to scale a distributed system without this kind of disruption, we need a hashing strategy where adding or removing a server only affects a small fraction of the keys.

The Solution: The Hash Ring

Consistent hashing was introduced by Karger et al. in 1997 (notably, several of the authors went on to co-found Akamai). The core idea is to map both servers and keys onto a circular hash space, hence the hash ring.

Let’s walk through the mechanics:

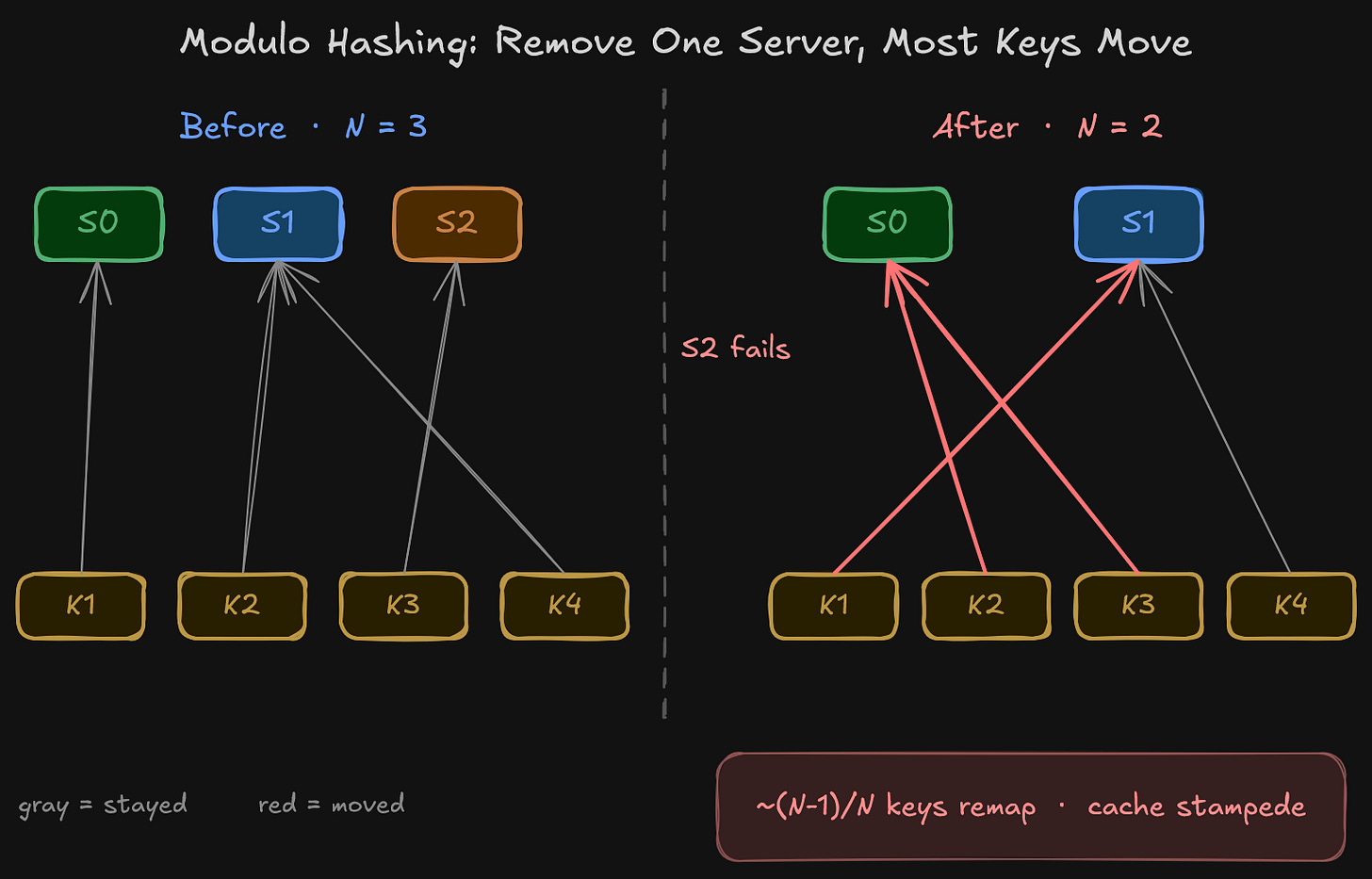

Imagine a circle representing the full range of hash values, from

0to2^32 - 1. This circle is the hash ring.Each server is hashed onto a position on this ring. The hash input is typically the server’s IP address, hostname, or some unique identifier.

Each key is also hashed onto the same ring using the same hash function.

In order to determine which server owns a given key, you start at the key’s position on the ring and walk clockwise until you reach a server. That server is responsible for the key.

Simply put, each server owns all the keys that fall between itself and the previous server on the ring (moving clockwise).

The hash ring. Servers and keys live on the same circular hash space. Each key walks clockwise to find its owner, so every key belongs to exactly one server.

Why This Solves the Remapping Problem

As mentioned earlier, the problem with modulo hashing is that changing N causes the majority of keys to move. The hash ring addresses this directly.

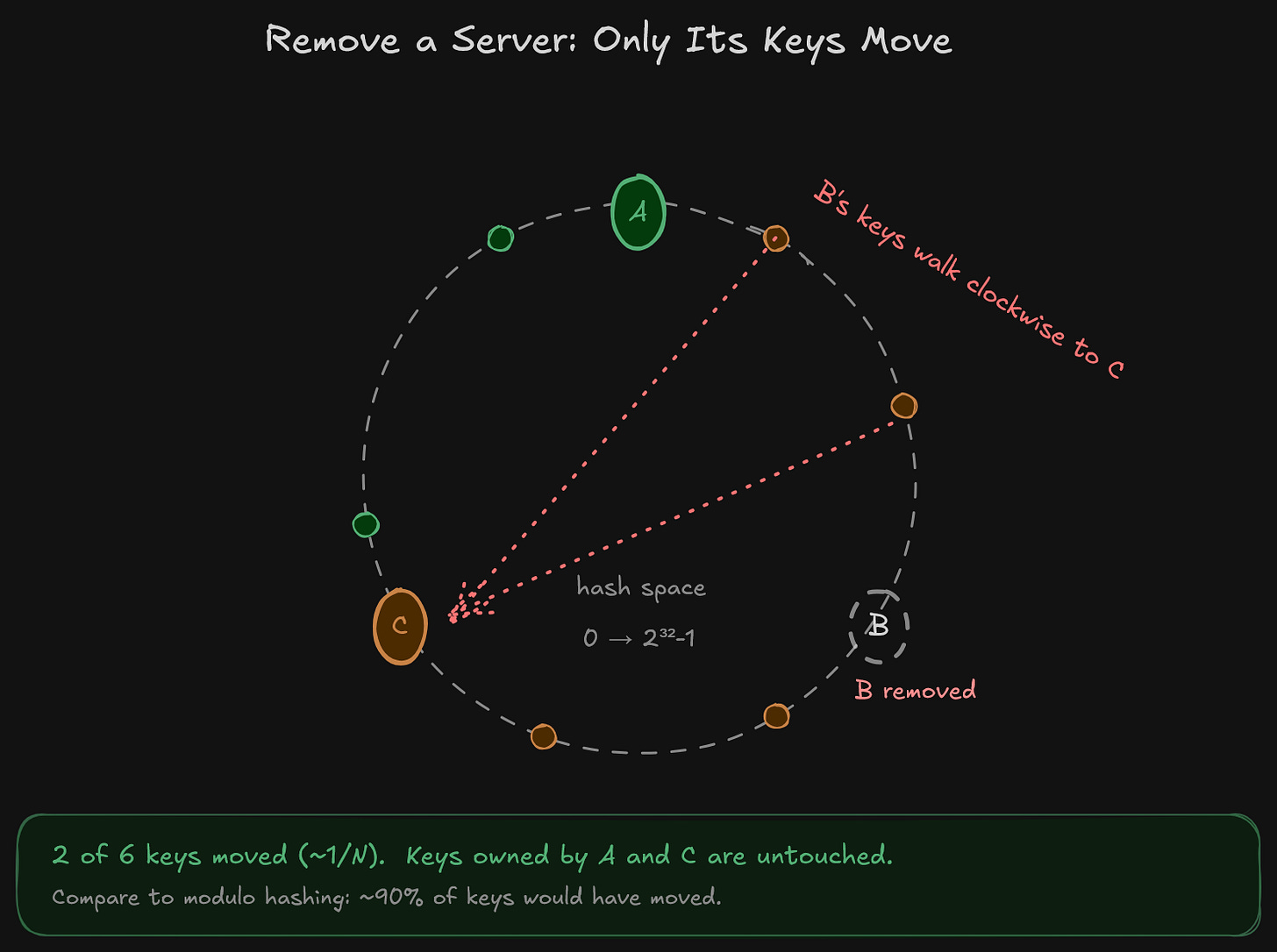

When a server is removed, only the keys that were assigned to that specific server need to be reassigned. Those keys simply walk clockwise to the next server on the ring. Every other key stays exactly where it is.

When a server is added, it takes over a portion of keys from the next server on the ring (the keys that now fall between the new server and the previous one). Again, every other key remains untouched.

Instead of ~90% of keys moving, only the keys owned by the affected server are touched. On average that works out to about 1/N of the keyspace, so removing one node from a 10-node cluster moves roughly 10% of keys instead of 90%. This is a massive improvement for cache cluster stability during scaling events.

There’s a caveat hiding in that sentence, though: on average. With only a handful of physical servers placed at random positions on the ring, the fraction actually owned by any one server can vary wildly. Remove an unlucky server and you might still shift 40% of your keys to a single neighbor. We’ll come back to this.

The Distribution Problem

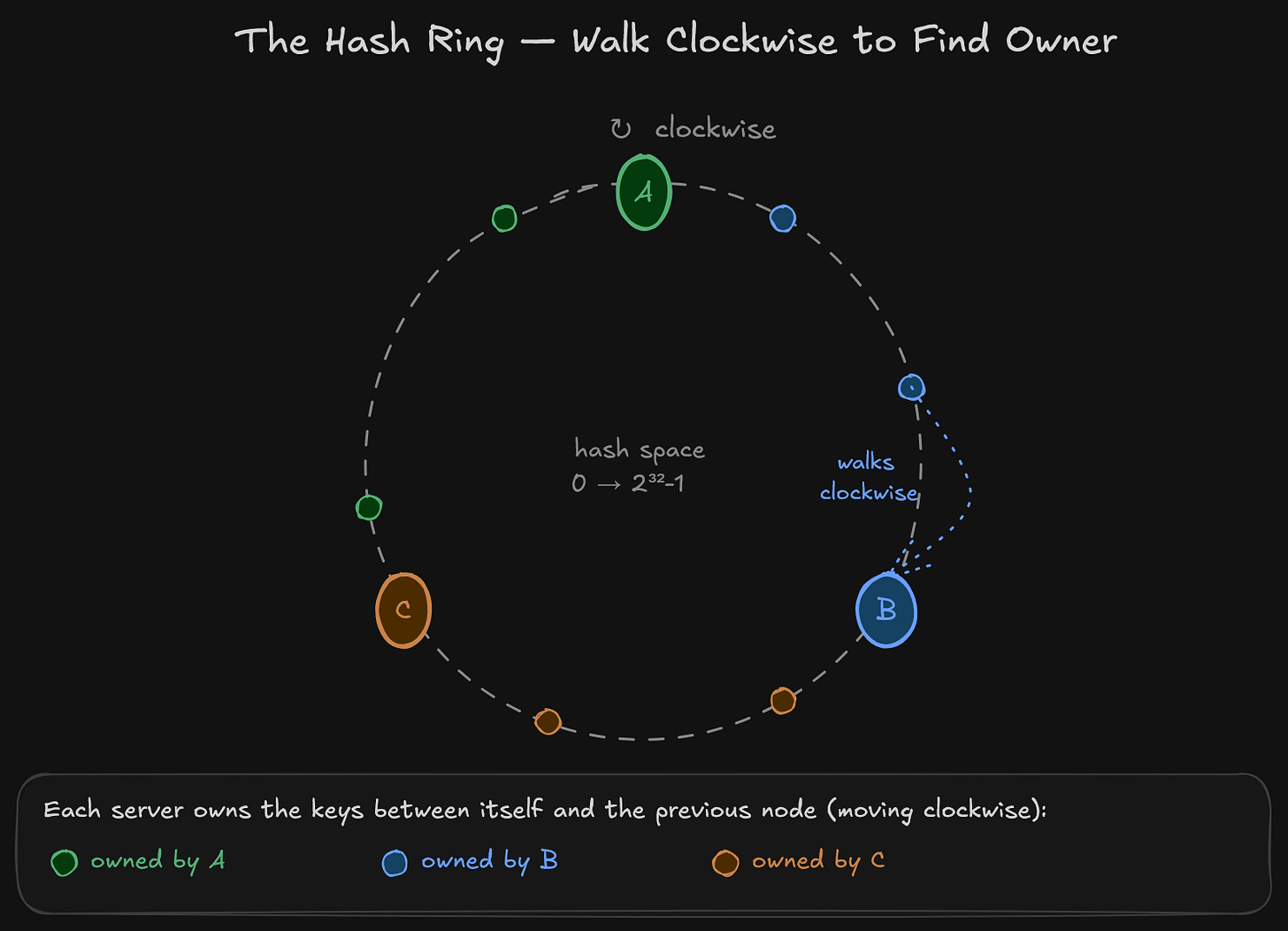

The hash ring solves the remapping problem, but it introduces a different issue. With only a few servers placed on the ring, the distribution of keys across servers is likely to be uneven. One server could own a large arc of the ring while another owns a small sliver. This means one server ends up handling significantly more keys (and therefore more traffic) than the others.

This is called hotspotting. A “distributed” system with uneven key distribution is, essentially, routing a disproportionate amount of load to a subset of its nodes.

Virtual Nodes

In order to address the distribution problem, most implementations of consistent hashing use virtual nodes (aka vnodes).

The idea is simple: instead of mapping each physical server to a single position on the ring, you map it to many positions. Each physical server gets, say, 100 or 200 positions (virtual nodes) spread across the ring. Server A doesn’t sit at one point. It sits at 200 points distributed around the ring. Same for Server B and Server C.

With more points on the ring, the distribution naturally evens out. Basically, it’s the same principle as a coin flip. Flip a coin 10 times and you might get 8 heads. Flip it 1,000 times and you’ll land much closer to a 50/50 split.

Virtual nodes also improve rebalancing behavior. When a new server is added, its virtual nodes are scattered across the ring, so it takes a small slice of keys from many existing servers rather than a large chunk from one. The load shift is gradual and spread out.

In terms of real-world defaults, Cassandra uses 256 virtual nodes per server. DynamoDB uses a similar approach internally. The number of vnodes is a configuration parameter. More vnodes means better distribution, but it also means slightly more memory for the routing table. In practice, the memory overhead is minimal and the distribution improvement is significant.

Virtual nodes also tighten the rebalancing math from earlier. The “only ~1/N keys move” claim holds reliably only when each physical node is represented by many vnodes. With just a few positions on the ring, removing a single server could shift a much larger fraction depending on where that server happened to land. Vnodes turn that probabilistic “on average” property into something concrete, something you can actually count on in production. This is the real reason Cassandra defaults to 256 vnodes per node, not just for distribution but for predictable rebalancing.

Left: with only 3 positions on the ring, one server ends up owning most of the keyspace. Right: with 4 vnodes per server (12 total), the same 3 servers share the ring evenly. Cassandra uses 256 vnodes per node in production for exactly this reason.

Consistent Hashing in Production

Consistent hashing isn’t a theoretical concept. It’s deployed in a myriad of production systems that handle data distribution at scale. Below is a look at some notable examples.

Amazon DynamoDB uses consistent hashing to distribute data across partitions. When a table grows or shrinks, DynamoDB splits or merges partitions accordingly. By virtue of consistent hashing, only the affected range of keys moves during these operations. The rest of the table remains undisturbed.

Apache Cassandra uses consistent hashing as its core partitioning strategy. Each node owns a range of the hash ring (referred to as a token range). When a new node is added to a Cassandra cluster, it takes over a portion of the ring from its neighbors. The vnodes system, as noted earlier, ensures this is spread across many small ranges rather than one large chunk.

Akamai (whose co-founders co-authored the original consistent hashing paper) uses it to route requests to edge servers in their CDN. When an edge server goes down, its traffic flows to the next server on the ring. The disruption to end users is minimal.

Memcached clients use consistent hashing on the client side to determine which cache server to read from and write to. The client hashes the key, walks the ring, and communicates directly with the correct server. No centralized coordination is needed.

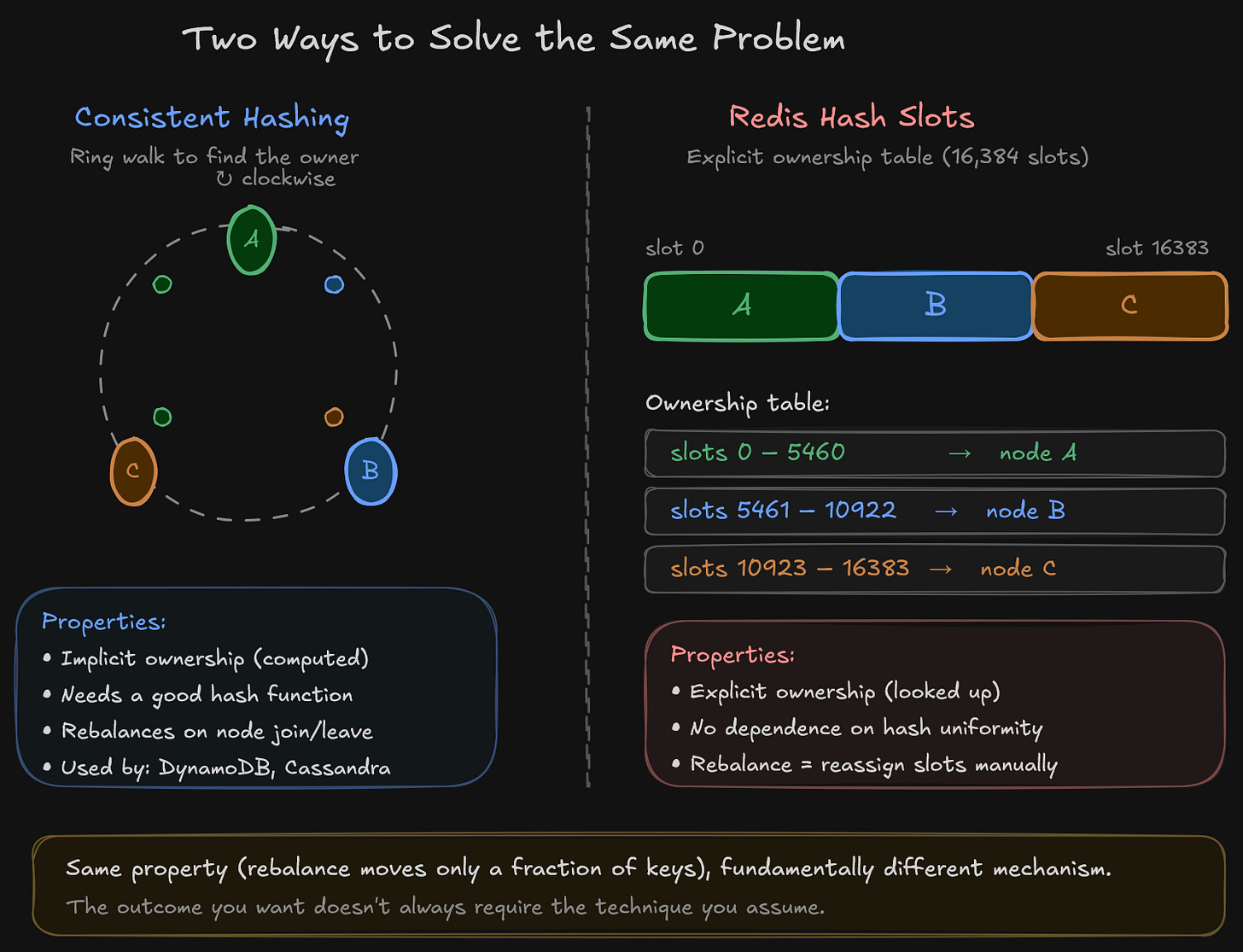

Redis Cluster uses a variant called hash slots (16,384 fixed slots distributed across nodes). This isn’t identical to ring-based consistent hashing, but the principle is the same: reassigning slots when nodes join or leave affects only a fraction of the keyspace.

Two ways to assign keys to nodes. The hash ring computes ownership implicitly by walking the ring. Redis Cluster stores ownership explicitly in a lookup table. Same rebalancing property (only a fraction of keys move), fundamentally different mechanism.

Limitations

It’s worth being honest about what consistent hashing does not solve.

It doesn’t handle replication. Consistent hashing tells you which node owns a key, but it doesn’t replicate that key to other nodes. Replication is a separate concern. Systems like DynamoDB and Cassandra layer replication on top of consistent hashing (typically by assigning each key to the next

Rnodes on the ring, whereRis the replication factor).It doesn’t guarantee perfectly even distribution. Virtual nodes help significantly, but with a small number of physical servers, some imbalance can still occur. The more physical servers and vnodes you have, the closer you get to uniform distribution.

Hash function choice matters. In order to get a good distribution on the ring, you need a hash function with good uniformity (e.g., MD5, MurmurHash, xxHash). A weak hash function can cluster positions on the ring and negate the benefits.

All in All

Consistent hashing addresses a fundamental problem in distributed systems: how to distribute data across a set of servers in a way that doesn’t fall apart when the number of servers changes. The hash ring ensures that adding or removing a server only affects approximately 1/N of the keys. Virtual nodes ensure that the key distribution across servers remains roughly even.

If you’re building or operating anything that distributes data across multiple nodes (caches, databases, CDNs, load balancers), you will encounter consistent hashing. It’s one of those foundational building blocks that countless distributed systems are built on.

What’s Next

Consistent hashing tells you which node owns a key. But what happens when a single logical dataset is too large to live on one machine, regardless of how you assign it to servers? That’s where sharding comes in: splitting a dataset into independent pieces that can live on separate machines, each with its own consistency and replication story.

That’s the next post in the series.

Ali

References

Consistent Hashing and Random Trees: Distributed Caching Protocols for Relieving Hot Spots on the World Wide Web, Karger et al. (1997). The original paper.

Dynamo: Amazon’s Highly Available Key-value Store, DeCandia et al. (2007)

Designing Data-Intensive Applications, Martin Kleppmann, Chapter 6 (Partitioning)