How OpenAI Serves 800 Million Users Without Sharding Postgres

A look at one of the most interesting engineering decisions behind one of the largest single-primary PostgreSQL deployments in the world.

OpenAI recently published a blog post titled Scaling PostgreSQL to Power 800 Million ChatGPT Users, and it’s one of those posts that I think anyone working with databases or distributed systems should read. Not because the techniques are new or groundbreaking, but because of what OpenAI chose not to do. No sharding. No fancy distributed databases. No custom storage engine. Just a single PostgreSQL primary, roughly 50 read replicas, and a lot of operational discipline.

When I first read this, I was shocked by how much restraint OpenAI showed in every decision. Oftentimes as engineers we want the fancy solution that’ll look cool and be awesome to talk about and show off, but that doesn’t mean it’s the best solution. The overarching goal of this post is to walk through what OpenAI did, why it works, and what we can take away from it as engineers building systems at scale.

Definitions and Background

Before getting into the specifics of OpenAI’s setup, let’s define a few things that’ll come up throughout this post.

Sharding: splitting a large database across multiple independent machines (shards), each responsible for a subset of the data. This lets you scale writes horizontally, but it introduces significant complexity: cross-shard queries, distributed transactions, rebalancing, and application-layer routing logic.

Write-Ahead Log (WAL): the mechanism PostgreSQL uses to ensure durability. Every change is first written to a sequential log before being applied to the actual data files. This same log is streamed to read replicas to keep them in sync with the primary. Think of it like a shared notebook: the primary writes every change into the notebook first, and replicas read from that notebook to stay up to date.

MVCC (Multi-Version Concurrency Control): how PostgreSQL handles concurrent access to data. Rather than making readers wait while a row is being updated, PostgreSQL creates a new version of the row and lets readers continue accessing the old version. This is great for read concurrency, but it has some well-documented downsides for write-heavy workloads (more on this later).

Now that we have some necessary background, let’s look at what OpenAI actually built.

The Architecture

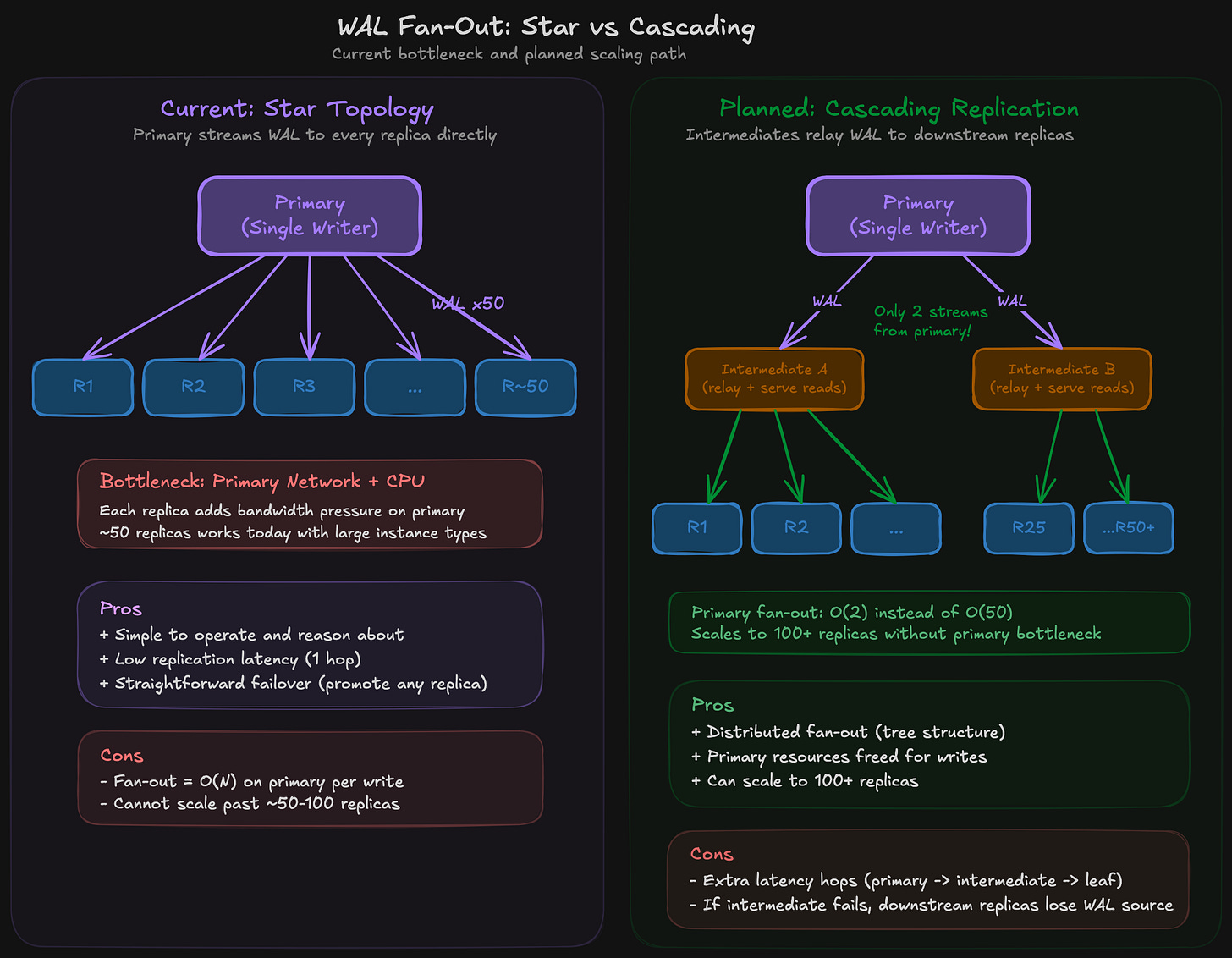

OpenAI’s core PostgreSQL deployment is surprisingly straightforward:

One primary instance on Azure PostgreSQL Flexible Server, handling all writes

~50 read replicas distributed across multiple geographic regions

PgBouncer (a connection pooling proxy) in front of every replica

A caching layer absorbing the majority of read traffic

Write-heavy workloads migrated to Azure Cosmos DB (a sharded NoSQL system)

That’s it. A single writer node handling writes for 800 million monthly active users, with reads fanned out to replicas. Their database load has grown by more than 10x in the past year, and they’re handling millions of queries per second (QPS) across the cluster.

So how does a single primary not fall over? The answer comes down to the way it’s accessed.

Why This Works

The key here is that ChatGPT’s workload is heavily skewed toward reads. Think about what the typical ChatGPT interaction looks like from a database perspective: fetch user data, fetch conversation history, fetch preferences. Read after read after read. The actual writes (creating a new message, updating a session) are a small fraction of total operations.

This matters because PostgreSQL’s replication model scales reads almost linearly. Each read replica is a full copy of the database that can independently serve queries. Add another replica, absorb another chunk of read traffic. From the primary’s point of view, a new replica is just one more WAL stream to send (a cost that does eventually add up, as covered in The Scaling Ceiling).

To put it even more simply, if your read-to-write ratio is high enough, horizontal read scaling through replication can get you very far. OpenAI is proof of that.
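To make the read/write split concrete, here is a minimal sketch in Python. The `Router` class and the node names are hypothetical and not taken from OpenAI’s post, and the `SELECT` check is a deliberately crude stand-in for real statement classification.

```python
import itertools


class Router:
    """Toy read/write splitter: writes go to the primary, reads rotate across replicas."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self._replicas = itertools.cycle(replicas)  # simple round-robin over replicas

    def route(self, sql):
        # Crude classification for illustration only: treat SELECT as a read.
        is_read = sql.lstrip().upper().startswith("SELECT")
        return next(self._replicas) if is_read else self.primary


router = Router("primary", ["replica-1", "replica-2", "replica-3"])
for statement in [
    "SELECT * FROM users WHERE id = 1",
    "SELECT * FROM messages WHERE conversation_id = 7",
    "INSERT INTO messages (conversation_id, body) VALUES (7, 'hi')",
    "SELECT * FROM preferences WHERE user_id = 1",
]:
    print(router.route(statement), "<-", statement)
```

Adding a replica to the list adds read capacity without touching the write path. A real router also has to decide what to do with reads that must see a write that was just made, since replicas can lag slightly behind the primary.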

Where PostgreSQL Struggles

OpenAI doesn’t hide from discussing PostgreSQL’s weaknesses, and the biggest one at their scale is the cost of writes under MVCC. The post directly references a blog that Bohan Zhang (the OpenAI engineer behind this effort) co-authored with Prof. Andy Pavlo at Carnegie Mellon University called The Part of PostgreSQL We Hate the Most.

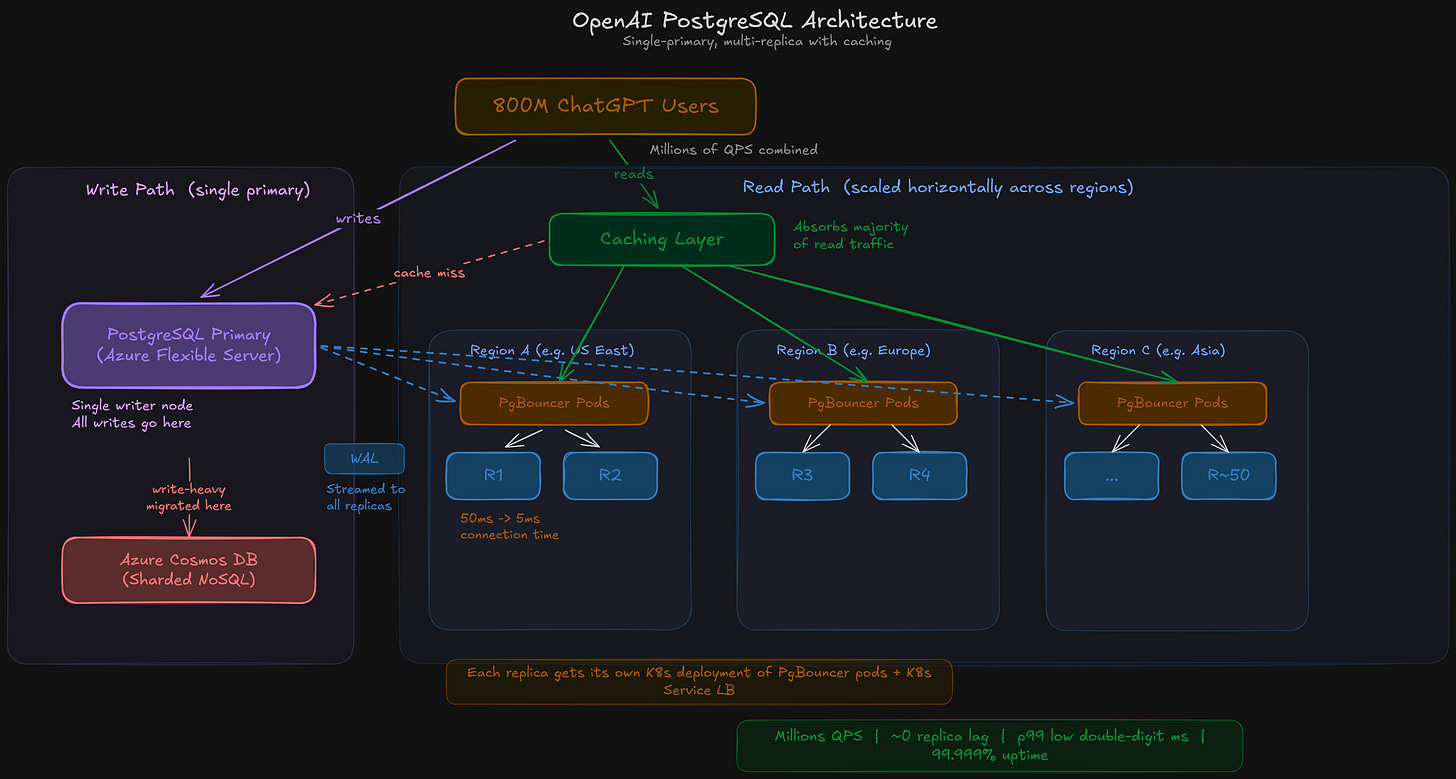

So what exactly is the problem? When PostgreSQL updates a row, even a single field, it doesn’t modify the row in place. Instead, it copies the entire row to create a new version. The old version becomes a “dead tuple” that sticks around until the autovacuum process cleans it up.

For example: imagine you have a user row with 20 columns, and you update just the last_login timestamp. PostgreSQL doesn’t touch the existing row. It writes a brand new copy of all 20 columns with the updated timestamp, and marks the old row as dead. That dead row still takes up space in the table and in every index that references it, and queries have to skip over it until autovacuum eventually reclaims it.
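The 20-column example can be mimicked with a toy model. This is only a sketch of the idea, not how PostgreSQL’s storage engine is implemented: the `Table` class, its `vacuum` method, and the column names are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Table:
    """Toy heap: every update appends a full new row version and marks the old one dead."""

    versions: list = field(default_factory=list)  # each entry: {"row": dict, "dead": bool}

    def insert(self, row):
        self.versions.append({"row": dict(row), "dead": False})

    def update(self, row_id, **changes):
        for version in self.versions:
            if not version["dead"] and version["row"]["id"] == row_id:
                version["dead"] = True  # the old version lingers as a dead tuple
                # The new version copies every column, not just the changed one.
                self.versions.append({"row": {**version["row"], **changes}, "dead": False})
                return
        raise KeyError(row_id)

    def vacuum(self):
        """Reclaim dead versions, loosely analogous to what autovacuum does."""
        before = len(self.versions)
        self.versions = [v for v in self.versions if not v["dead"]]
        return before - len(self.versions)


users = Table()
users.insert({"id": 1, **{f"col_{i}": i for i in range(18)}, "last_login": "2024-01-01"})
for day in ("2024-01-02", "2024-01-03", "2024-01-04"):
    users.update(1, last_login=day)

dead = sum(v["dead"] for v in users.versions)
print(f"{len(users.versions)} physical versions, {dead} dead")
print("reclaimed by vacuum:", users.vacuum())
```

Three one-field updates leave four full copies of the row on disk, three of them dead, until the cleanup pass runs.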

This design leads to several compounding issues:

Write amplification: A small logical change produces a disproportionately large physical write.

Read amplification: Queries have to scan past dead tuples to find the current version of a row.

Table and index bloat: Dead tuples accumulate in both tables and indexes, consuming storage and degrading query performance over time.

Autovacuum complexity: The garbage collector (autovacuum) that reclaims dead tuples requires careful tuning, and long-running transactions can block it entirely.

The practical consequence at OpenAI’s scale is that writes are expensive and they ripple through the entire system. More writes mean more WAL, which means more data streaming to ~50 replicas, which means more network bandwidth consumed and potentially more replication lag. One logical field change on the primary can cascade into 50x the network traffic.

OpenAI’s solution wasn’t to fix PostgreSQL’s storage engine. It was to reduce the write surface area as aggressively as possible:

They migrated shardable write-heavy workloads to Cosmos DB

They fixed application bugs that were causing redundant writes

They introduced lazy writes to smooth out traffic spikes

They rate-limited backfills (even if the process takes over a week)

They banned new tables from being added to the PostgreSQL cluster entirely

That last point is worth sitting with. They’re effectively treating the PostgreSQL cluster as a closed system for writes. Existing workloads stay, but all new workloads go to sharded systems. To keep the primary healthy, they’ve drawn a line in the sand.
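As one illustration of what a rate-limited backfill can look like, here is a hedged sketch. The function name, batch size, and rate are assumptions chosen for the example; OpenAI’s post does not describe their implementation.

```python
import time


def throttled_backfill(row_ids, write_batch, batch_size=100, max_batches_per_sec=2.0,
                       sleep=time.sleep, clock=time.monotonic):
    """Apply write_batch to row_ids in small batches, never faster than the configured rate."""
    min_interval = 1.0 / max_batches_per_sec
    batches = 0
    for start in range(0, len(row_ids), batch_size):
        started = clock()
        write_batch(row_ids[start:start + batch_size])
        batches += 1
        # Sleep off whatever remains of this batch's time slot.
        remaining = min_interval - (clock() - started)
        if remaining > 0:
            sleep(remaining)
    return batches


# Demo with a fake sleep so the example finishes instantly.
written, naps = [], []
count = throttled_backfill(list(range(250)), written.extend, sleep=naps.append)
print(f"{count} batches, {len(written)} rows written, {len(naps)} pauses")
```

The trade-off is exactly the one in the list above: the backfill takes much longer, but the primary never sees more than a bounded trickle of extra writes.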

The Patterns Worth Studying

Beyond the high-level architecture, there are several specific patterns in the post that are worth understanding in detail.

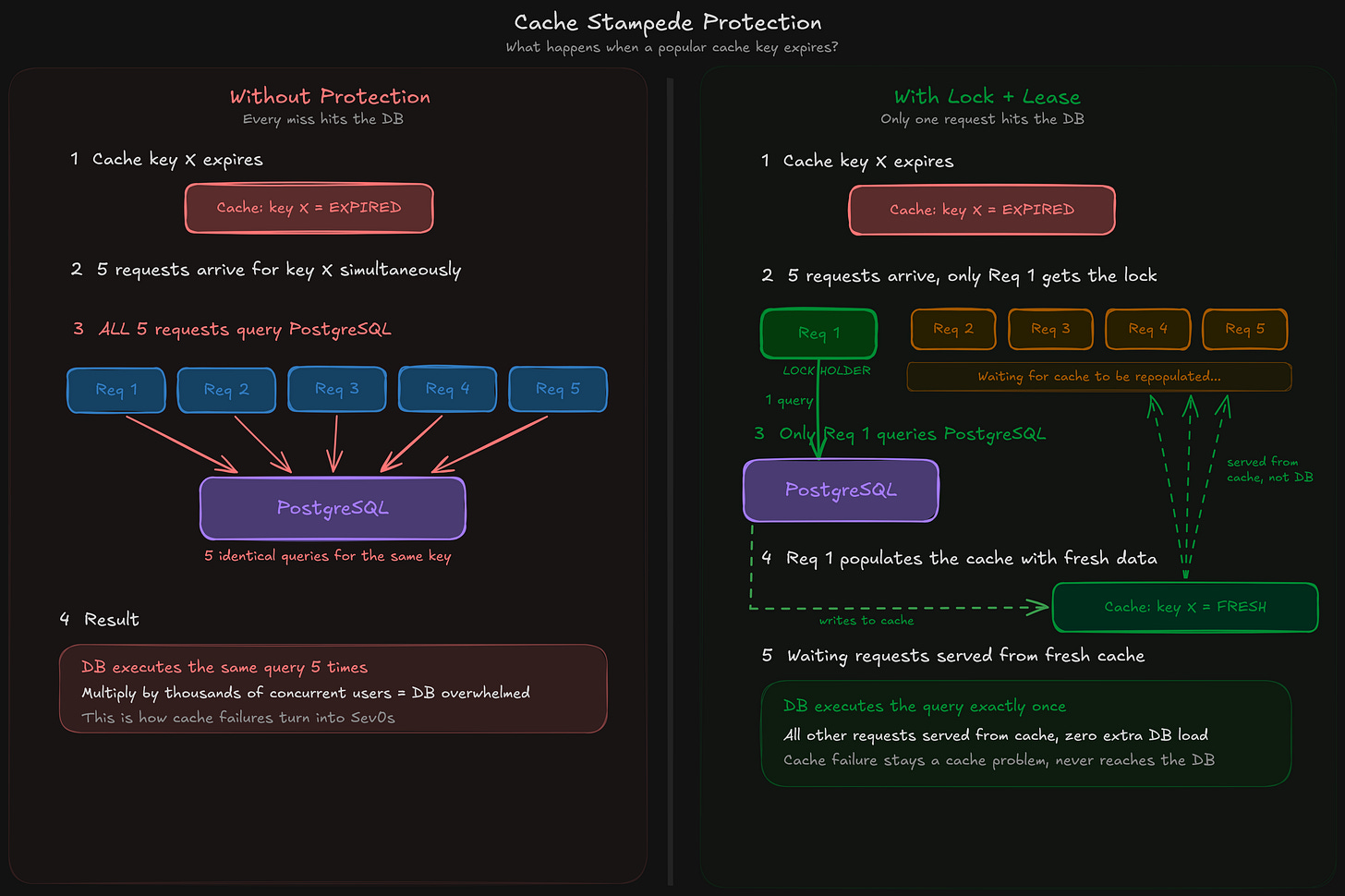

Cache Stampede Protection

When their caching layer has misses, a naive implementation would send every missed request straight to PostgreSQL, potentially overwhelming it. OpenAI implements a cache locking (and leasing) mechanism: on a cache miss for a given key, only one request acquires a lock and fetches from the database. All other requests for the same key wait for the cache to be repopulated.

Here’s an analogy. Imagine 1,000 people all try to look up the same book in a library at the same time, and the book isn’t on the shelf. Without protection, all 1,000 people walk to the back room and ask the librarian for it simultaneously. With stampede protection, one person goes to the back room, and the other 999 wait at the shelf until the book is returned and reshelved. The librarian (PostgreSQL) only gets asked once.

This pattern is sometimes called “stampede protection” or “request coalescing.” It’s one of those things that’s easy to skip during initial implementation and then regret during your first major cache failure. If you’re building any system with a caching layer in front of a database, this is worth implementing early.
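Here is a minimal in-process sketch of request coalescing using Python threads. `SingleFlightCache` and `slow_db_lookup` are hypothetical names, and this version has no leases, timeouts, or eviction, all of which a distributed cache like the one OpenAI describes would need.

```python
import threading
import time


class SingleFlightCache:
    """On a miss, one caller per key loads from the database; the rest wait for that result."""

    def __init__(self, loader):
        self._loader = loader    # function key -> value, stands in for a database query
        self._values = {}
        self._inflight = {}      # key -> Event that is set when the load finishes
        self._lock = threading.Lock()

    def get(self, key):
        while True:
            with self._lock:
                if key in self._values:
                    return self._values[key]
                event = self._inflight.get(key)
                leader = event is None
                if leader:
                    event = self._inflight[key] = threading.Event()
            if leader:
                try:
                    value = self._loader(key)
                    with self._lock:
                        self._values[key] = value
                    return value
                finally:
                    # Wake followers even if the load failed, so one of them can retry.
                    with self._lock:
                        del self._inflight[key]
                    event.set()
            event.wait()  # follower: wait for the leader, then re-check the cache


calls = []


def slow_db_lookup(key):
    calls.append(key)
    time.sleep(0.05)
    return f"row-for-{key}"


cache = SingleFlightCache(slow_db_lookup)
threads = [threading.Thread(target=cache.get, args=("user:42",)) for _ in range(50)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print("database calls:", len(calls))
```

Fifty concurrent requests for the same key result in a single call to the loader; everyone else is served from the repopulated cache.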

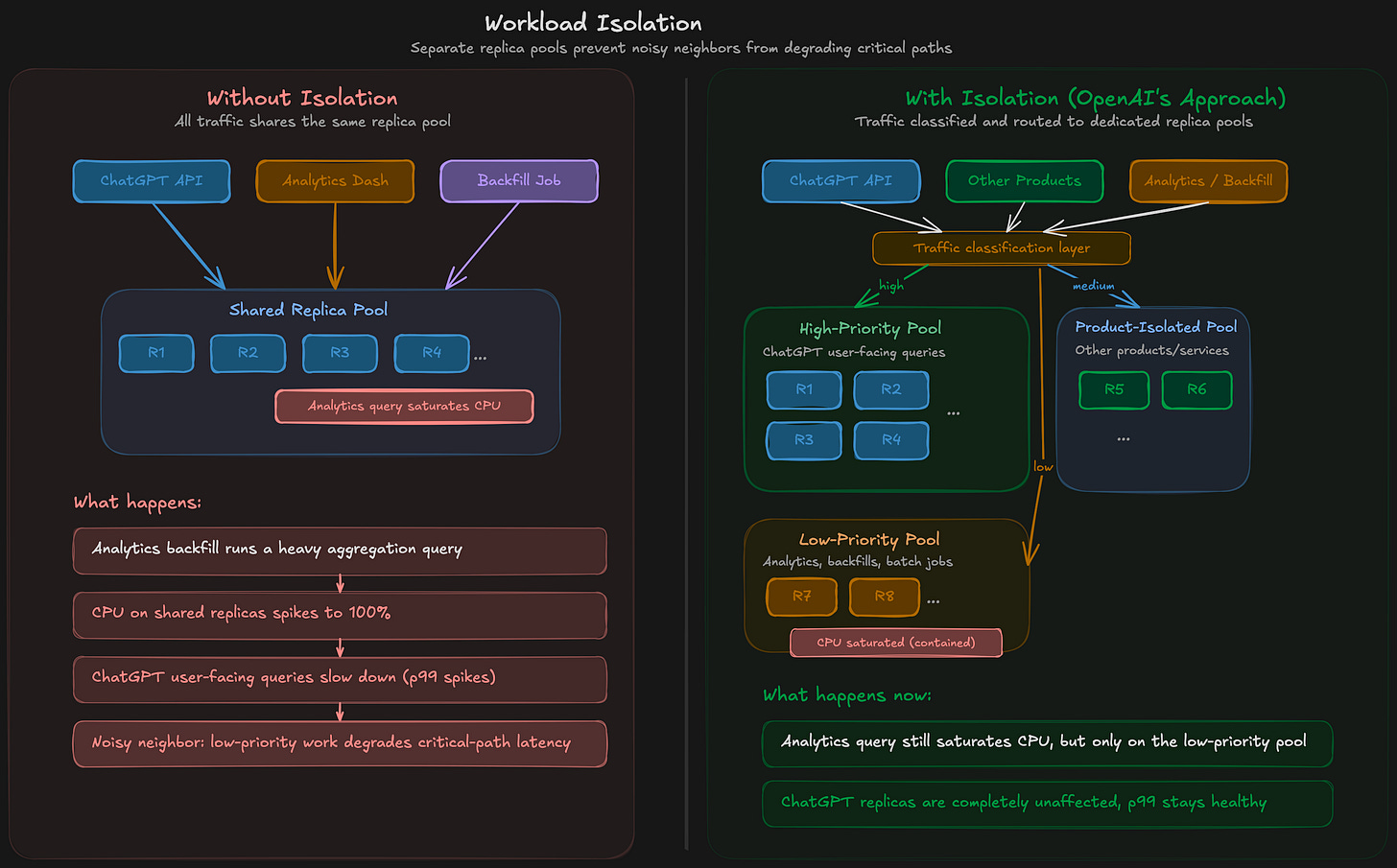

Workload Isolation

They split traffic into low-priority and high-priority tiers and route them to separate replica pools. This prevents the “noisy neighbor” problem, where an expensive analytical query or a poorly optimized new feature can saturate CPU and degrade latency for critical-path requests. They apply the same isolation across different products and services as well, so that activity from one product doesn’t affect the performance of another.

Basically, this is the same principle as Quality of Service (QoS) in networking: classify traffic, isolate resource pools, and make sure lower-priority work can’t starve higher-priority work.
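A rough sketch of tier-based routing, assuming two hypothetical tiers (`high` and `low`) and made-up replica names. The post does not say how OpenAI classifies requests, so the fallback to the low tier is my own choice.

```python
import itertools


class TieredRouter:
    """Route each request to the replica pool reserved for its priority tier."""

    def __init__(self, pools, default_tier="low"):
        # One round-robin cursor per tier. Pools share no replicas, so a hot
        # tier cannot consume another tier's capacity.
        self._cursors = {tier: itertools.cycle(replicas) for tier, replicas in pools.items()}
        self._default = default_tier

    def pick(self, tier):
        # Unclassified traffic is treated as low priority rather than trusted.
        cursor = self._cursors.get(tier, self._cursors[self._default])
        return next(cursor)


router = TieredRouter({
    "high": ["replica-a1", "replica-a2"],   # critical-path requests
    "low": ["replica-b1"],                  # analytics, experiments, new features
})
print(router.pick("high"), router.pick("high"), router.pick("low"), router.pick("unknown"))
```

However expensive the queries sent to `replica-b1` get, they never compete for CPU with requests served by the `high` pool.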

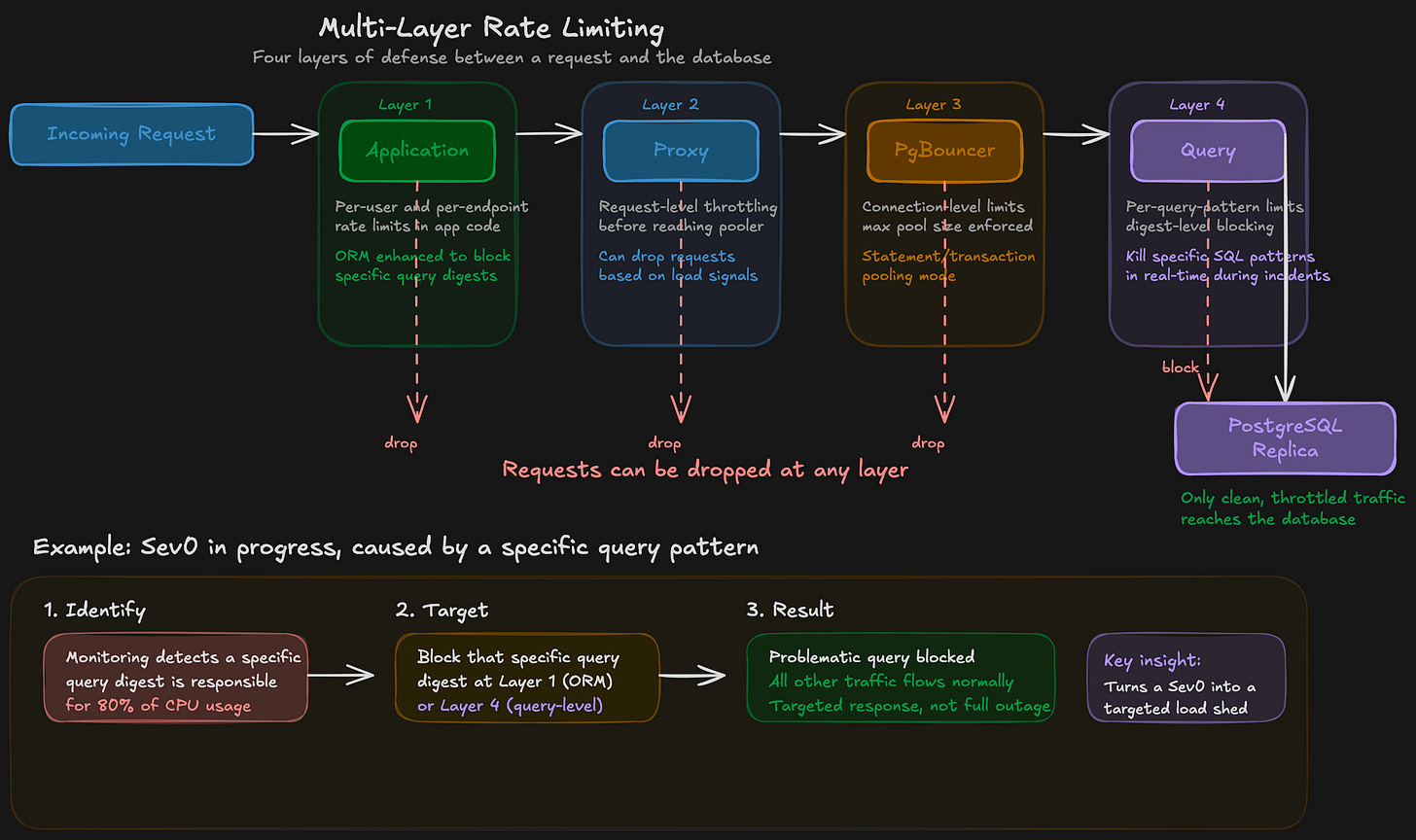

Multi-Layer Rate Limiting

Rate limiting at the application layer alone isn’t sufficient. OpenAI implements rate limiting at four layers: application, connection pooler, proxy, and query. They even enhanced their ORM (Object-Relational Mapping) layer to support blocking specific query digests for targeted load shedding.

The ability to identify and kill a specific problematic query pattern in real-time during an incident is a powerful operational tool. It turns what would be a full-service degradation into a targeted response.
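To show what blocking by query digest might look like, here is a simplified sketch. The normalization rules, `DigestGate`, and `BlockedQueryError` are assumptions for illustration; the post does not describe how OpenAI’s ORM computes digests, and real normalizers parse the SQL rather than using regular expressions.

```python
import hashlib
import re


def query_digest(sql):
    """Normalize literals and whitespace so queries with the same shape share a digest."""
    shape = re.sub(r"'(?:[^']|'')*'", "?", sql)         # string literals
    shape = re.sub(r"\b\d+(?:\.\d+)?\b", "?", shape)    # numeric literals
    shape = re.sub(r"\s+", " ", shape).strip().lower()
    return hashlib.sha256(shape.encode()).hexdigest()[:16]


class BlockedQueryError(RuntimeError):
    pass


class DigestGate:
    """Reject any query whose shape has been put on the blocklist."""

    def __init__(self):
        self.blocked = set()

    def block(self, sql_example):
        digest = query_digest(sql_example)
        self.blocked.add(digest)
        return digest

    def check(self, sql):
        if query_digest(sql) in self.blocked:
            raise BlockedQueryError("query shape is temporarily blocked")
        return sql


gate = DigestGate()
gate.block("SELECT * FROM events WHERE user_id = 1 ORDER BY created_at")
try:
    gate.check("select * from events where user_id = 982 order by created_at")
except BlockedQueryError as err:
    print("shed:", err)
print("allowed:", gate.check("SELECT name FROM users WHERE id = 7"))
```

Because the digest ignores literal values, blocking one example sheds every instance of that query pattern while leaving all other traffic untouched.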

The 12-Table Join That Caused Sev0s

One of the more memorable details: they identified an extremely costly query that joined 12 tables, and found that spikes in this query were directly responsible for past Sev0 incidents. Their takeaway is to avoid complex multi-table joins in OLTP (Online Transaction Processing) workloads, and to break them into multiple simpler queries with the join logic handled in the application layer.

This is a cautionary tale about ORMs. ORM frameworks make it easy to define relationships between models and then traverse them in application code. Under the hood, many ORMs eagerly join across those relationships, producing SQL that no human DBA would write. If you’re using an ORM in a high-traffic system, it’s important to carefully review the actual SQL being generated, not just the ORM code.
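Here is a small sketch of moving join logic into the application, using Python’s built-in `sqlite3` as a stand-in for PostgreSQL. The two-table schema and the `conversations_with_owner` helper are invented for the example; the same idea extends to longer join chains.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE conversations (id INTEGER PRIMARY KEY, user_id INTEGER, title TEXT);
    INSERT INTO users VALUES (1, 'ada'), (2, 'lin');
    INSERT INTO conversations VALUES (10, 1, 'trip plan'), (11, 1, 'recipes'), (12, 2, 'resume');
""")


def conversations_with_owner(conn, conversation_ids):
    """Two primary-key lookups plus an in-memory join, instead of one multi-table JOIN."""
    if not conversation_ids:
        return []
    marks = ",".join("?" * len(conversation_ids))
    convs = conn.execute(
        f"SELECT id, user_id, title FROM conversations WHERE id IN ({marks}) ORDER BY id",
        conversation_ids,
    ).fetchall()
    if not convs:
        return []
    user_ids = sorted({user_id for _, user_id, _ in convs})
    marks = ",".join("?" * len(user_ids))
    names = dict(conn.execute(
        f"SELECT id, name FROM users WHERE id IN ({marks})", user_ids
    ).fetchall())
    # The "join" happens here, in application memory.
    return [(conv_id, title, names[user_id]) for conv_id, user_id, title in convs]


print(conversations_with_owner(conn, [10, 12]))
```

Each query is simple and predictable for the database to plan, and each one can be cached, rate-limited, or blocked on its own. The cost is extra round trips and join code you now have to maintain yourself.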

Connection Pooling

Each Azure PostgreSQL instance has a maximum connection limit of 5,000. At OpenAI’s scale, it’s easy to run out of connections or accumulate too many idle ones. They’ve had incidents caused by connection storms that exhausted all available connections.

To address this, they deployed PgBouncer as a proxy layer to pool database connections, running it in statement or transaction pooling mode. This allows efficient reuse of connections and greatly reduces the number of active client connections. In their benchmarks, average connection time dropped from 50 milliseconds to 5 milliseconds. That’s a 10x improvement just from connection pooling.

Each read replica gets its own Kubernetes deployment running multiple PgBouncer pods, with a Kubernetes Service load-balancing traffic across them. They also co-locate the proxy, clients, and replicas in the same region to minimize network overhead.
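The core idea behind pooling, many callers sharing a small fixed set of connections, fits in a few lines. This is an in-process toy with a hypothetical `ConnectionPool` class, not a model of how PgBouncer works internally.

```python
import queue
from contextlib import contextmanager


class ConnectionPool:
    """Fixed-size pool: callers borrow a connection and hand it back when done."""

    def __init__(self, connect, size):
        self.opened = 0
        self._idle = queue.Queue()
        for _ in range(size):
            self._idle.put(connect())   # pay the connection setup cost once, up front
            self.opened += 1

    @contextmanager
    def connection(self, timeout=None):
        # Blocks when every connection is busy; raises queue.Empty after `timeout` seconds.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)        # return it as soon as the unit of work ends


pool = ConnectionPool(connect=object, size=3)   # object() stands in for a real connection
for _ in range(1000):
    with pool.connection() as conn:
        pass  # run one transaction here
print("requests served: 1000, connections opened:", pool.opened)
```

A thousand units of work run over three connections, and a burst of callers waits in a queue instead of opening new connections against the database’s limit.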

The Decision Not to Shard

Perhaps the most interesting engineering decision in the entire post is the one they didn’t make: sharding PostgreSQL.

They explicitly state that sharding existing workloads would require changes to hundreds of application endpoints and could take months or even years. Instead, they chose to:

Optimize the single-primary architecture as far as possible

Migrate new write-heavy workloads to already-sharded systems (Cosmos DB)

Gradually migrate existing write-heavy workloads off PostgreSQL over time

This is a rational decision. Sharding introduces enormous complexity: cross-shard queries, distributed transactions, rebalancing, application-layer routing, and schema migration coordination across shards. For a read-heavy workload where the single primary still has headroom, the operational cost of sharding doesn’t justify the benefit.

OpenAI isn’t saying sharding is bad. They’re saying sharding isn’t worth it for them, right now, given their access patterns and the engineering cost of migration. They’re not ruling it out for the future, but it’s not a near-term priority.

The Scaling Ceiling

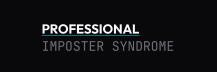

Star vs. cascading replication topologies for WAL fan-out. In the current star topology (left), the primary streams WAL directly to every replica, so its outbound load grows as O(N) in the number of replicas. In the planned cascading topology (right), intermediate replicas relay WAL to downstream nodes, reducing the primary’s direct fan-out to a small constant number of streams and enabling 100+ replicas. The trade-off is added latency hops and more complex failover if an intermediate node fails.

The most interesting forward-looking challenge they discuss is WAL fan-out. As mentioned earlier, the primary streams Write-Ahead Log data to every single replica. At ~50 replicas, this works with large instance types and high-bandwidth networking, but it doesn’t scale indefinitely. Each additional replica adds more network and CPU pressure on the primary.

Their planned solution is cascading replication: intermediate replicas receive WAL from the primary and relay it to downstream replicas, forming a tree structure instead of a star topology. This would allow them to scale to potentially over 100 replicas without the primary becoming a network bottleneck.

There’s a trade-off here though. A star topology (primary → all replicas) is simple and has low latency, but the fan-out creates a bottleneck at the center. A tree topology distributes the fan-out load but adds latency hops and complicates failover. If an intermediate replica goes down, its downstream replicas lose their WAL source. OpenAI notes they’re still testing this with the Azure PostgreSQL team and won’t deploy it until failover is reliable.
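The trade-off can be put into rough numbers. The sketch below assumes an idealized, evenly filled tree in which every node feeds at most `branching` downstream replicas; the branching factors are my assumption, not figures from the post.

```python
def star_fanout(replicas):
    """Star: the primary streams WAL to every replica directly."""
    return {"primary_streams": replicas, "max_hops": 1 if replicas else 0}


def cascading_fanout(replicas, branching):
    """Tree: each node (primary included) feeds at most `branching` downstream replicas."""
    if branching < 1:
        raise ValueError("branching must be at least 1")
    remaining, level_capacity, hops = replicas, branching, 0
    while remaining > 0:
        remaining -= min(remaining, level_capacity)  # fill the next level of the tree
        level_capacity *= branching
        hops += 1
    return {"primary_streams": min(replicas, branching), "max_hops": hops}


for n in (50, 100):
    print(n, "replicas, star:      ", star_fanout(n))
    print(n, "replicas, tree (b=2):", cascading_fanout(n, 2))
    print(n, "replicas, tree (b=5):", cascading_fanout(n, 5))
```

With 50 replicas, a branching factor of 2 cuts the primary’s outbound streams from 50 to 2 but puts the deepest replica 5 hops away; a branching factor of 5 needs only 3 hops. Every extra hop is another place where lag can accumulate and another node whose failure strands the replicas beneath it.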

The Results

To put some concrete numbers on the outcomes:

Millions of QPS across the cluster (combined reads and writes)

~50 replicas with near-zero replication lag

Low double-digit millisecond p99 client-side latency

Five-nines availability (99.999%)

One Sev0 in 12 months, which occurred during the viral launch of ChatGPT ImageGen when write traffic surged by more than 10x as over 100 million new users signed up within a week

That last data point is revealing. Even with all of these optimizations, a 10x write spike during a viral launch still overwhelmed the system. Writes remain the weak point, and OpenAI knows it.
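For a sense of how little room five nines leaves, the downtime budget is simple arithmetic. The helper below is just that calculation, not a measurement of OpenAI’s actual downtime.

```python
def allowed_downtime_minutes(availability, days=365):
    """Downtime budget implied by an availability target over a period of `days`."""
    return (1.0 - availability) * days * 24 * 60


for label, target in [("three nines", 0.999), ("four nines", 0.9999), ("five nines", 0.99999)]:
    print(f"{label}: {allowed_downtime_minutes(target):.2f} minutes per year")
```

At 99.999%, the whole year’s budget is a little over five minutes, which is why a single write surge during a viral launch is enough to register as a Sev0.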

All in All

A few things stand out to me from this post.

Boring technology works. PostgreSQL is 30+ years old. PgBouncer has been around since 2007. Read replicas are as old as relational databases themselves. None of this is new, and that’s the point. OpenAI isn’t succeeding because they have exotic infrastructure. They’re succeeding because they execute the fundamentals with discipline.

Access patterns determine architecture. The entire architecture is predicated on the workload being read-heavy. If ChatGPT’s workload were write-heavy, this whole approach would fall apart. Understanding your actual read/write ratio, your query distribution, and your hot paths isn’t just an optimization exercise. It determines your entire system design.

Operational discipline matters more than architectural cleverness. The post reads like a list of things they stopped doing: no new tables on PostgreSQL, no complex joins, no unthrottled backfills, no write-heavy workloads without migration plans. Scaling is as much about what you refuse to let into the system as it is about what you build.

Sharding is a last resort, not a first instinct. The distributed systems community sometimes treats sharding as the default answer to scaling problems. OpenAI’s experience shows that for many workloads, the complexity cost of sharding exceeds the benefit, and that you can go remarkably far with a single primary, read replicas, and careful write management.

In closing, I think the most valuable lesson here isn’t any single optimization technique. It’s the reminder that understanding your workload deeply, and making deliberate trade-offs based on that understanding, will take you further than reaching for complex distributed systems before you need them.

Have you dealt with similar scaling decisions at work? Did you shard too early, or wish you had sooner? I’d love to hear about it in the comments.

Source: Scaling PostgreSQL to Power 800 Million ChatGPT Users — OpenAI Engineering Blog